From malicious IDE extensions to AI hallucination attacks and self-replicating supply chain worms, the modern development toolchain is under sustained attack. This post examines the four major vectors and what organisations should do about them.

The Enemy in the Editor: Securing the Modern Software Supply Chain

From Malicious IDE Extensions to AI Hallucinations

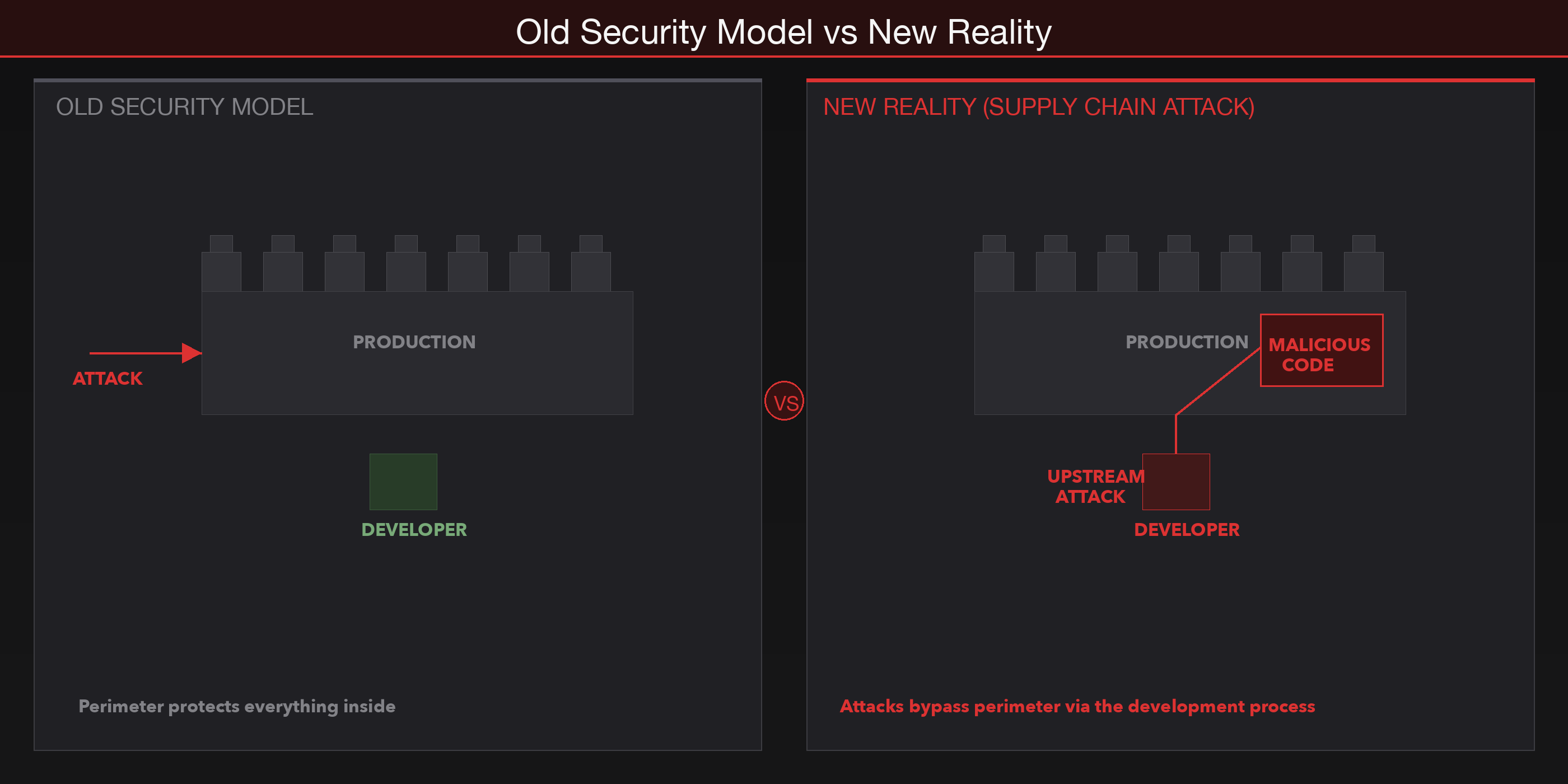

Software security conversations tend to focus on what happens after deployment. Firewalls, intrusion detection, vulnerability scanning — these are all responses to threats that arrive at the perimeter. But something has quietly changed over the past few years. The most effective compromises no longer target running systems. They target the systems that build them.

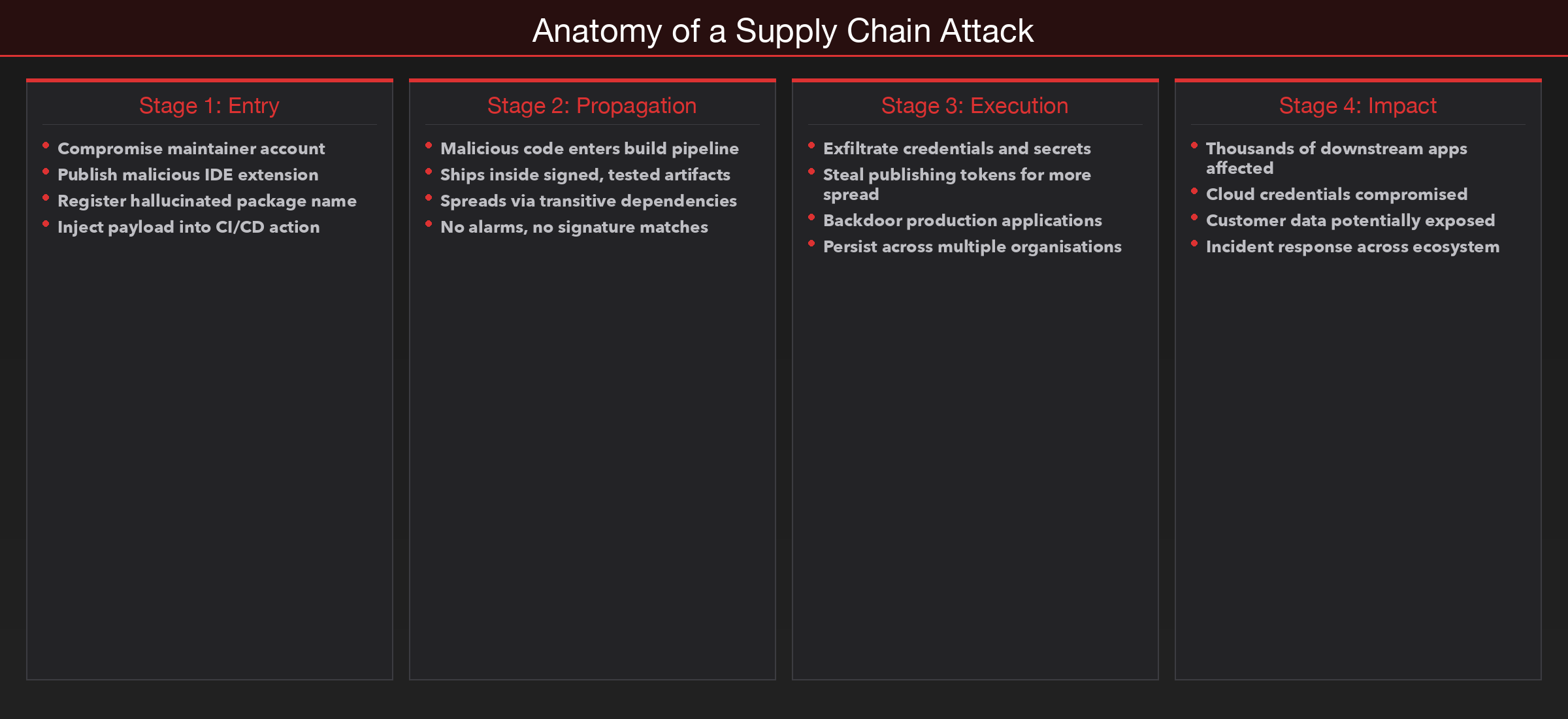

The developer workstation, the code editor, the package manager, the CI pipeline — each of these is a trust boundary. And each of them has become a reliable entry point for attackers who understand that compromising the creation process is far more efficient than attacking the finished product. When a malicious dependency is installed during development, it ships to production inside a signed, tested, approved artifact. No alarm fires. No signature matches. The threat is already inside.

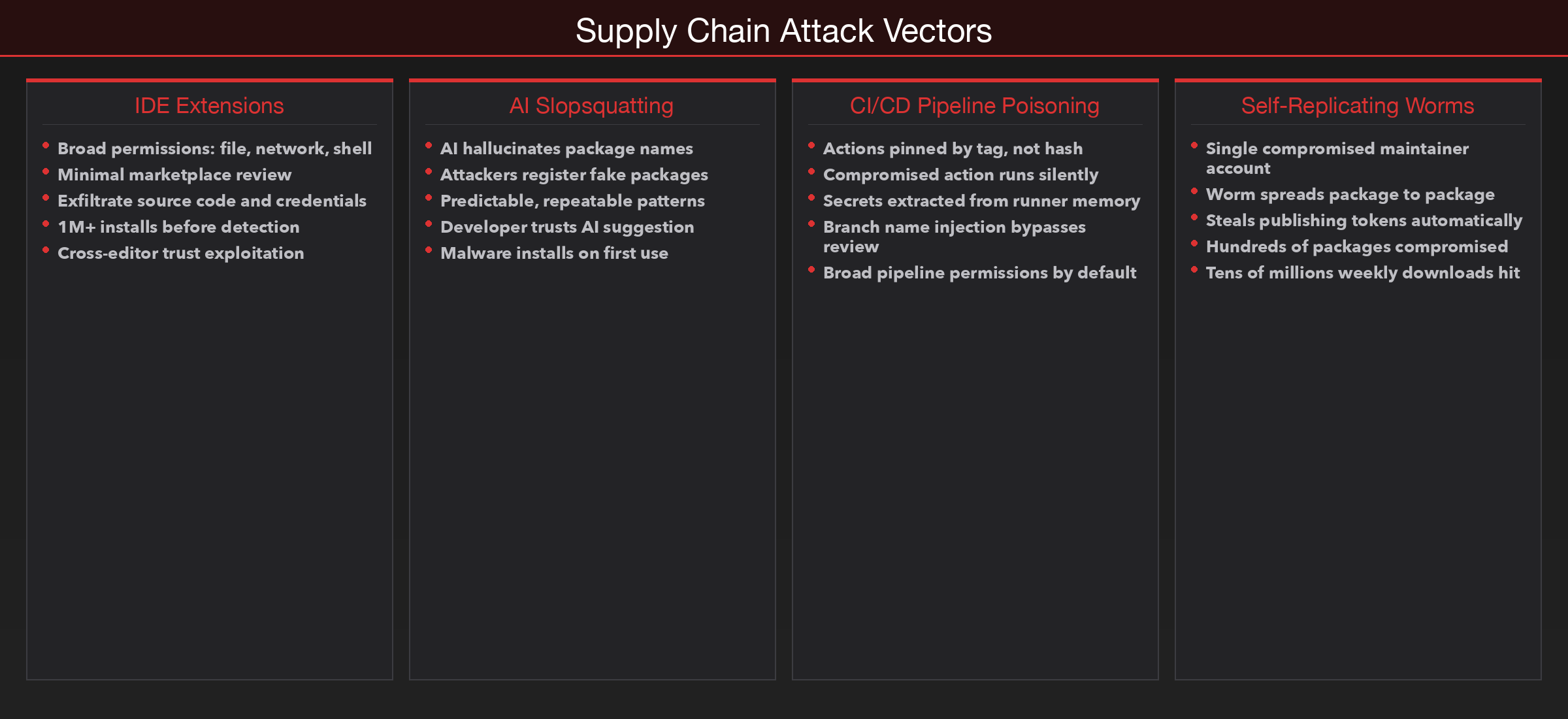

Over the past year, the volume of malicious open-source packages has grown sharply. Automated build pipelines have been hijacked through compromised actions. IDE extensions with over a million installs have been caught quietly exfiltrating source code. And a new class of attack — one that exploits the predictable mistakes of AI code assistants — has moved from research papers into real-world abuse. The modern software supply chain is under sustained, systematic pressure, and the attacks are becoming harder to detect because they exploit the very tools developers are taught to trust.

TL;DR

- IDE extensions operate with broad permissions and minimal review, making them effective vehicles for data exfiltration and credential theft

- AI code assistants hallucinate package names at measurable rates, and attackers are registering those names to deliver malware — a technique called slopsquatting

- Self-replicating supply chain worms have demonstrated the ability to compromise hundreds of packages and propagate across maintainer accounts automatically

- CI/CD pipelines built on third-party actions inherit the security posture of every upstream dependency, and several high-profile compromises have exposed thousands of secrets

- Proactive controls — mandatory two-factor authentication, dependency pinning, provenance verification — have measurably reduced attack success where they have been adopted

The Supply Chain Battlefield Has Shifted Upstream

For most of the history of application security, the threat model assumed a clear boundary between development and production. Code was written on local machines, reviewed by peers, tested in staging, and deployed to servers that faced the internet. The attack surface was the running application. Security teams focused their attention accordingly — patching servers, hardening configurations, monitoring network traffic.

That model assumed something that is no longer true: that the development environment itself was safe.

Modern software is not built from scratch. It is assembled. A typical application pulls in hundreds or thousands of open-source packages, each maintained by individuals or small teams with varying security practices. The build process is automated through CI/CD pipelines that execute arbitrary code defined in configuration files. The editor itself runs extensions that can read files, make network requests, and execute commands. Every layer of the development toolchain is now a potential insertion point, and attackers have noticed.

The logic is straightforward. If you compromise a package that ten thousand applications depend on, you do not need to attack ten thousand applications. You attack one package, and the compromise propagates through every downstream consumer automatically. The same principle applies to build actions, editor extensions, and AI-suggested dependencies. The earlier in the pipeline you insert malicious code, the more systems it reaches, and the less likely it is to be caught by controls designed to inspect finished artifacts.

The Developer Workstation Is the New Frontline

The developer machine occupies a unique position in any organisation's security architecture. It has access to source code repositories, cloud credentials, API keys, deployment pipelines, and internal services. It is often the only place where all of these converge. And yet, the security controls applied to developer workstations are frequently lighter than those applied to production servers.

Attackers understand this trade-off intimately. A compromised developer workstation provides access to everything needed to stage a deeper attack: source code for identifying vulnerabilities, credentials for accessing cloud infrastructure, and pipeline configurations for injecting code into the build process. In many cases, the developer machine is the single point of failure for the entire software delivery chain.

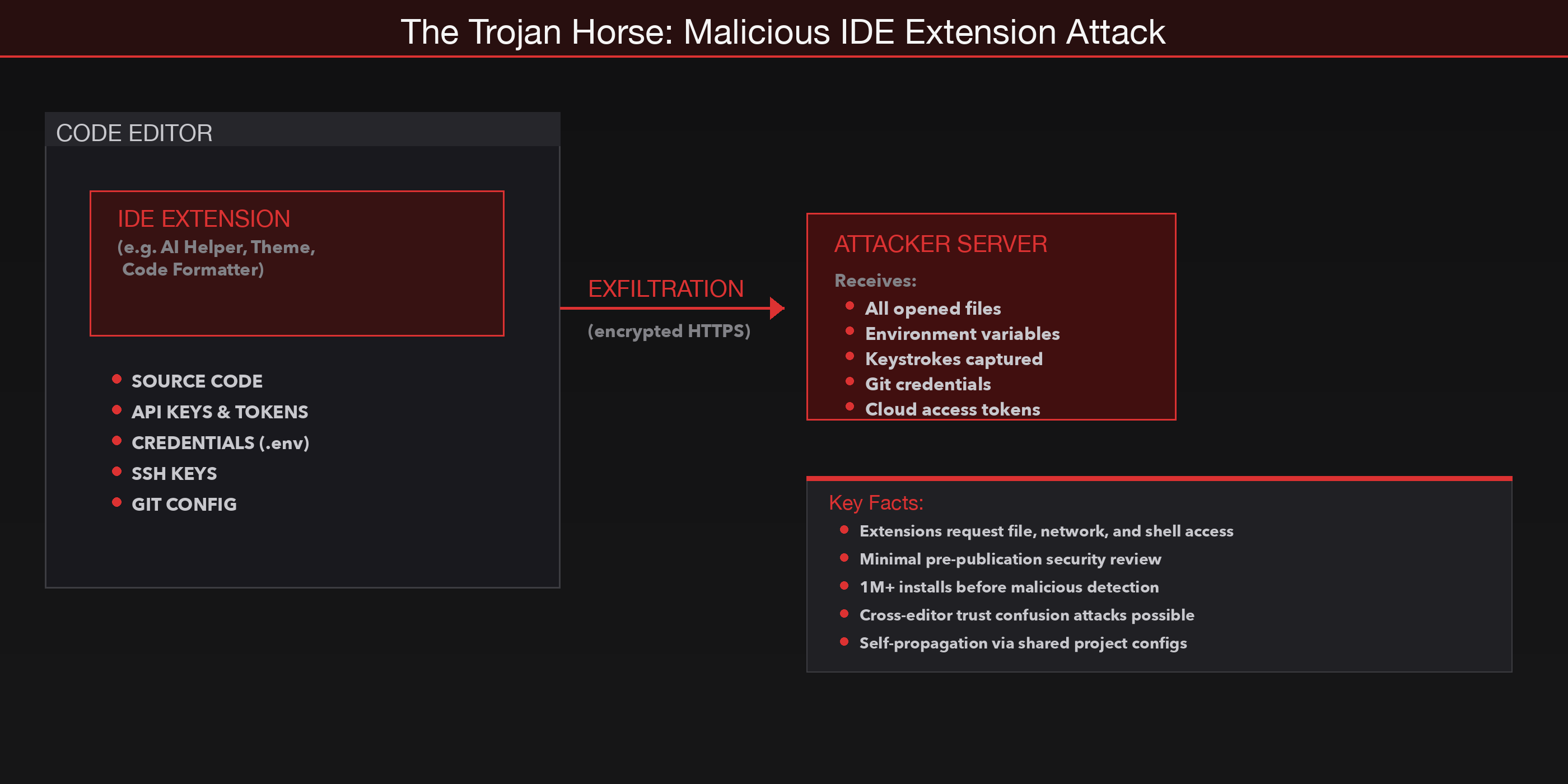

The Trojan Horse: Weaponising the Extension Ecosystem

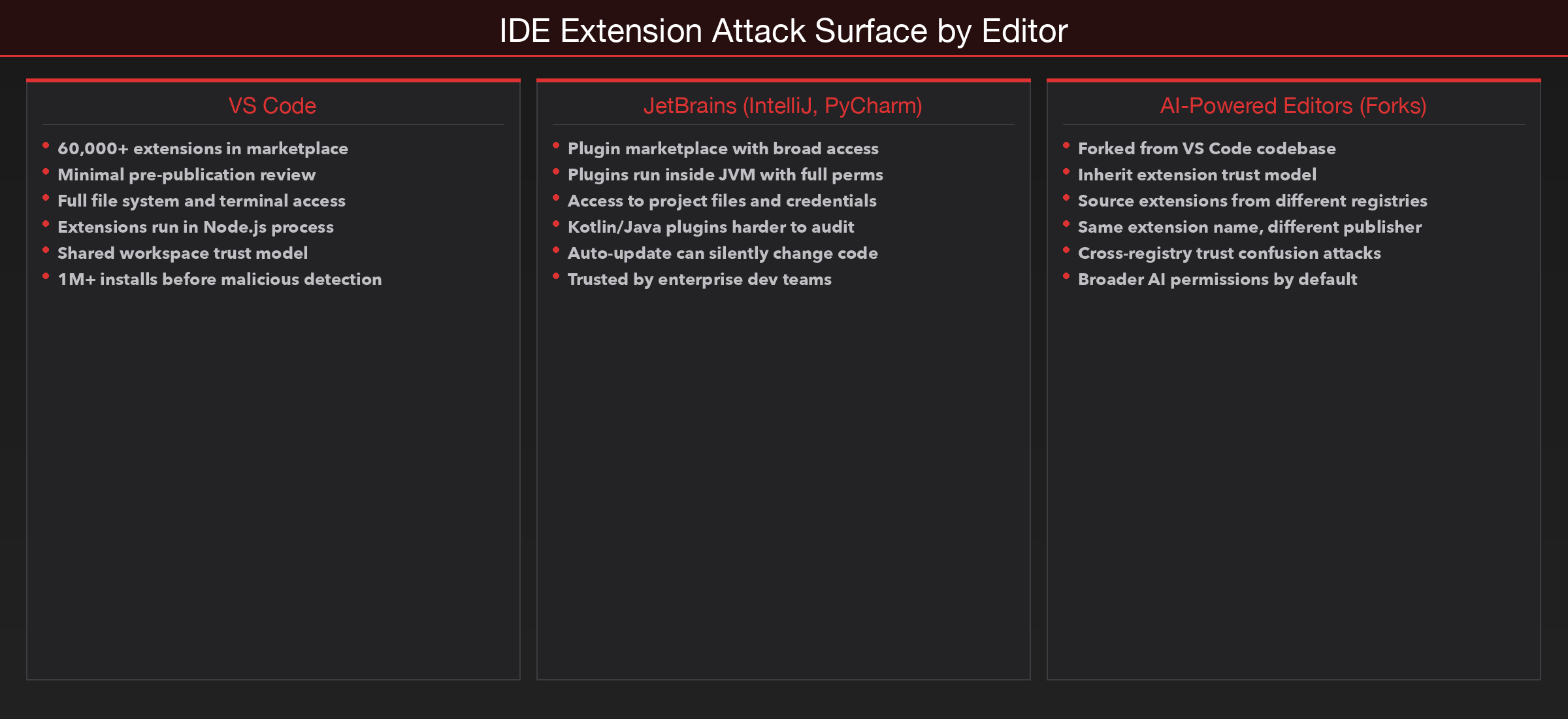

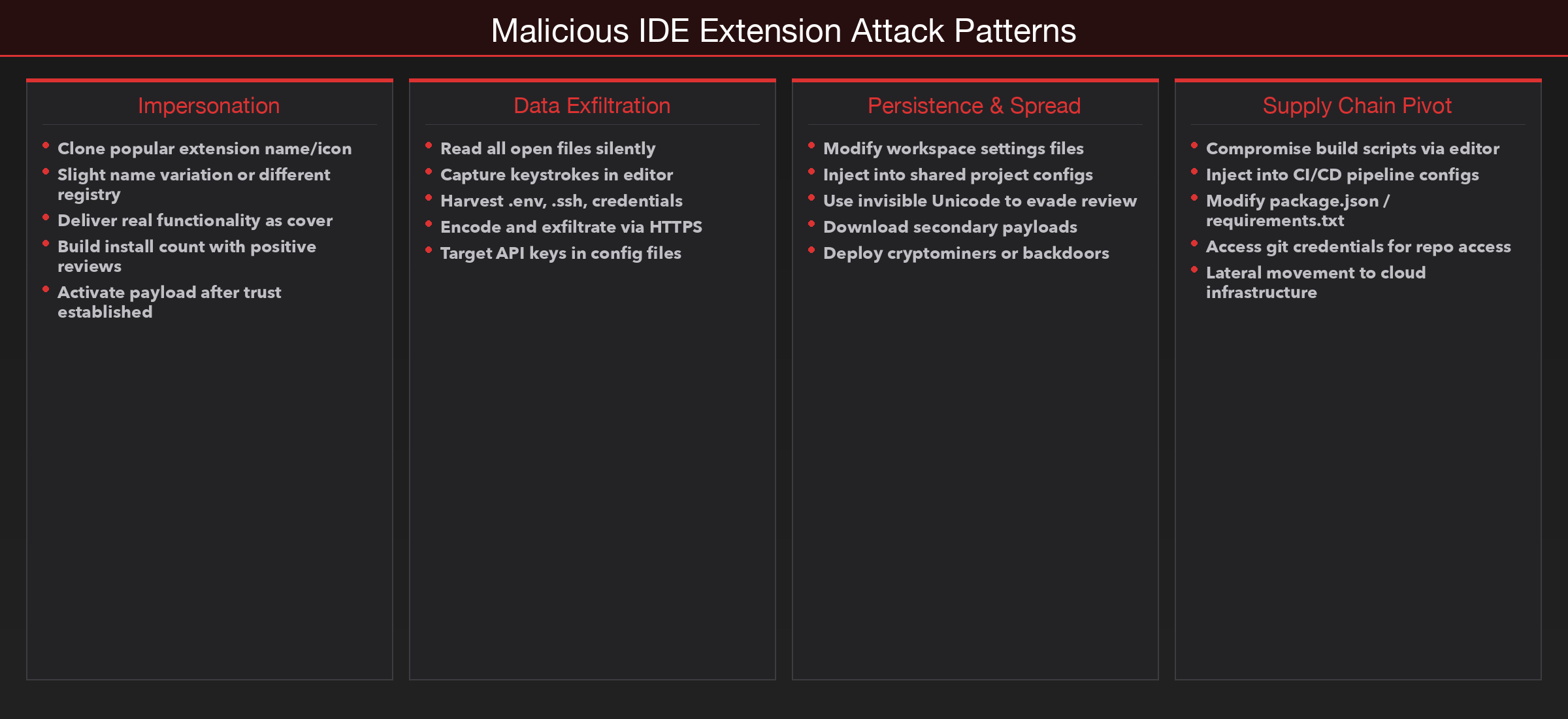

Code editors have become platforms in their own right, and their extension marketplaces function much like mobile app stores. Developers browse, install, and trust extensions to enhance their workflow — syntax highlighting, linting, debugging, AI assistance, theme customisation. The marketplace model encourages rapid adoption: a high install count and a familiar name are usually enough to earn trust.

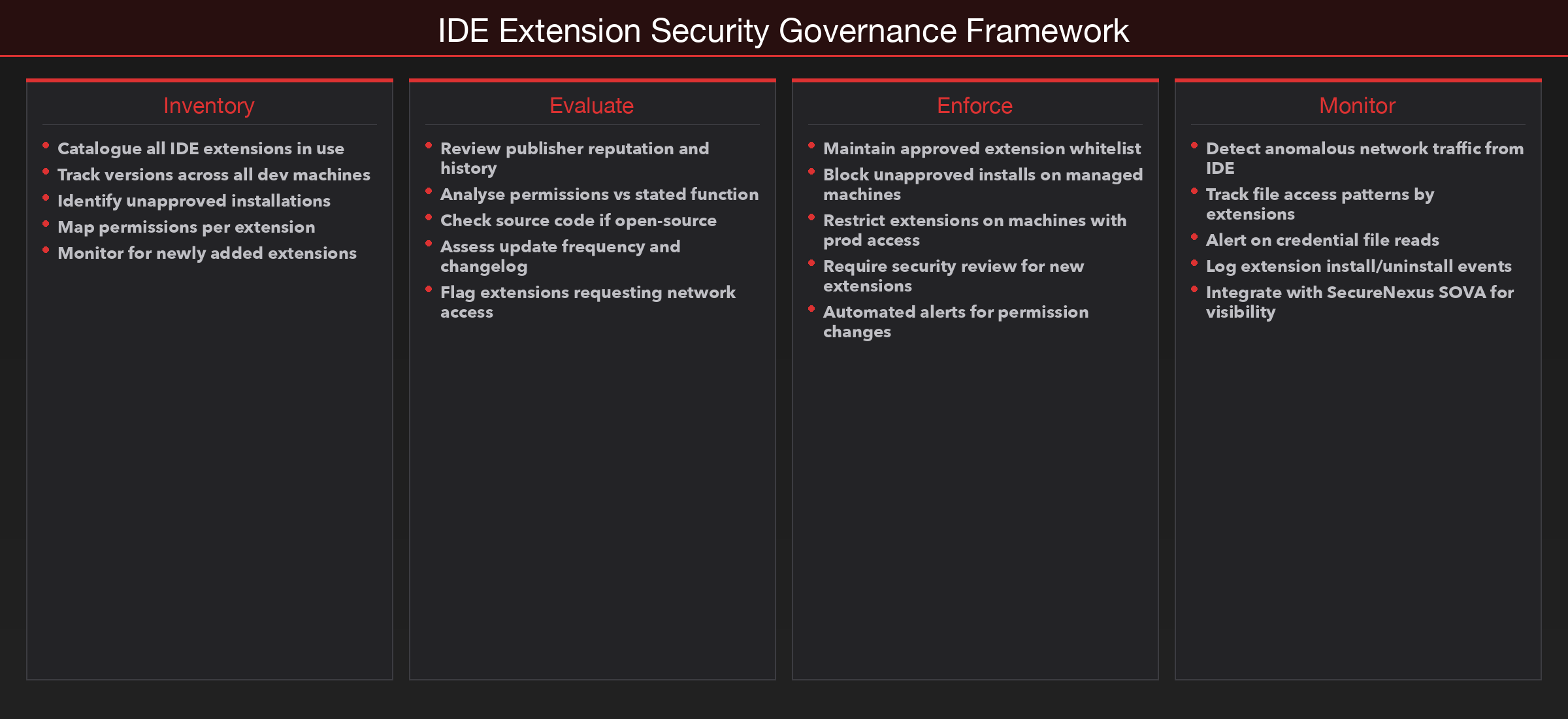

But the security model behind these marketplaces is fundamentally reactive. Extensions are published with minimal upfront review. They can request broad permissions — file system access, network access, the ability to execute commands — and most developers accept these without scrutiny. The marketplace does not perform deep code analysis before listing an extension. By the time a malicious extension is flagged and removed, it may have been installed by thousands or hundreds of thousands of developers.

The attack patterns that have emerged follow a predictable structure. An extension presents itself as a useful tool — an AI assistant, a code formatter, a theme. It delivers the promised functionality so that users leave positive reviews and the install count climbs. Meanwhile, in the background, it performs actions the user never authorised. Common payloads include exfiltrating the contents of opened files, capturing keystrokes, harvesting credentials from environment variables, or downloading and executing secondary payloads.
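Because the install count and the advertised features say nothing about what an extension does in the background, one practical check is to compare what is actually installed against a reviewed list. The following is a minimal sketch in Python, not a complete control. It assumes extension identifiers in `publisher.name` form; the function name, the allowlist, and the sample identifiers are all hypothetical.

```python
def audit_extensions(installed, approved):
    """Return installed extension IDs that are not on the approved list.

    IDs are compared case-insensitively; that is an assumption of this
    sketch, not a documented guarantee of any particular marketplace.
    """
    approved_normalised = {ext.strip().lower() for ext in approved}
    unapproved = []
    for ext in installed:
        ext_id = ext.strip().lower()
        if ext_id and ext_id not in approved_normalised:
            unapproved.append(ext_id)
    return sorted(unapproved)


# Hypothetical data: in practice `installed` would come from the editor itself.
APPROVED = ["ms-python.python", "esbenp.prettier-vscode"]
INSTALLED = ["ms-python.python", "Example-Publisher.free-ai-helper"]

if __name__ == "__main__":
    for ext_id in audit_extensions(INSTALLED, APPROVED):
        print(f"not on approved list: {ext_id}")
```

A check like this only tells you that something unreviewed is present. Deciding whether it is malicious still requires looking at the permissions it requests and the code it ships.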

The AI Trap: Hallucinations and Slopsquatting

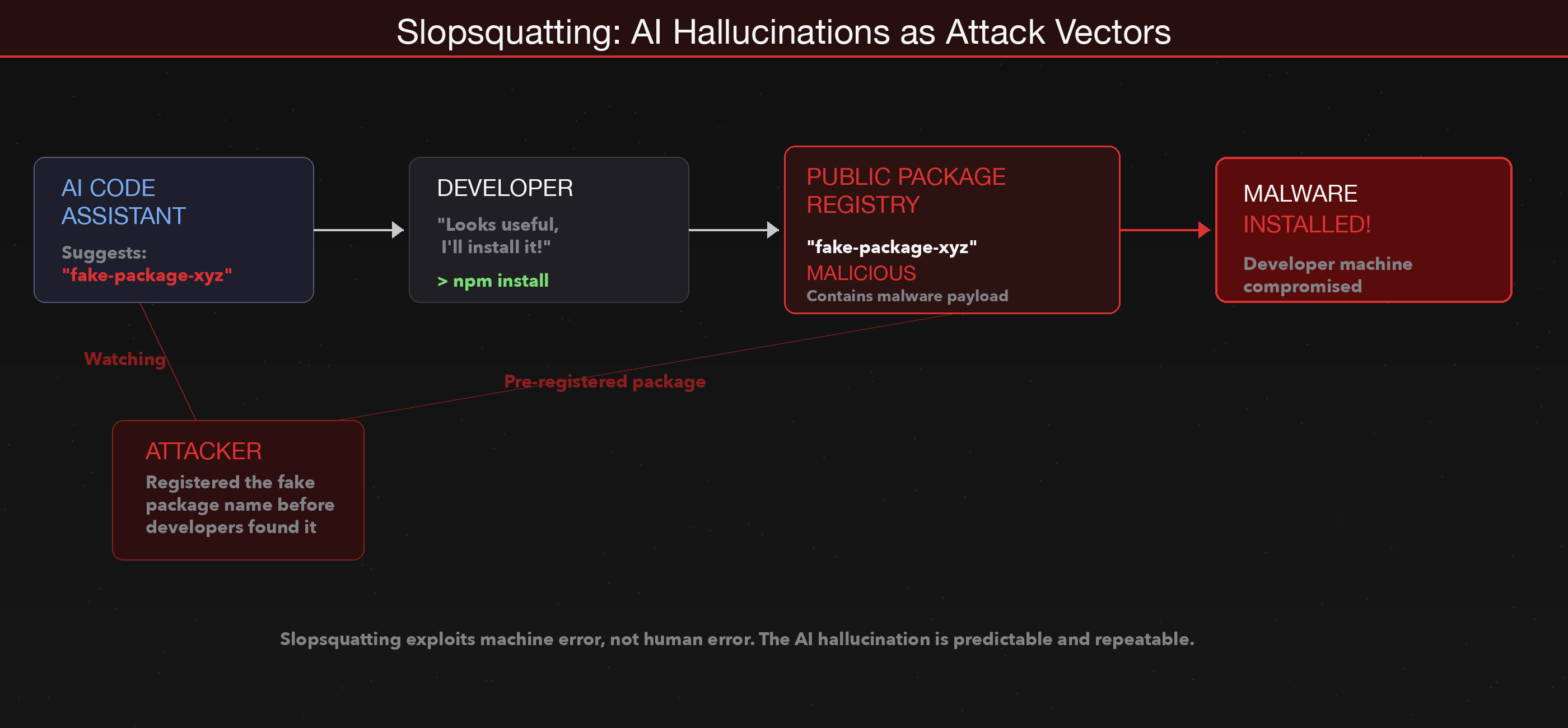

AI-powered code assistants have become a routine part of development. They suggest code completions, generate boilerplate, and recommend packages for common tasks. For many developers, the workflow has shifted from searching documentation to accepting AI suggestions directly in the editor. The productivity gains are real, but so is a new category of risk that the industry is only beginning to understand.

The core problem is that large language models do not look up packages in a registry before recommending them. They generate package names based on patterns learned during training. Most of the time, the suggested package exists and is appropriate. But at a measurable rate, the model produces a name that sounds plausible but does not correspond to any real package. These are hallucinated dependencies — package names that the AI invented.

| Attack Type | Source of Error | Scale | Detectability |
|---|---|---|---|
| Typosquatting | Human typo (mistyped character) | Limited to common misspellings | Moderate: spell-check helps |
| Slopsquatting | AI hallucination (invented name) | Predictable, repeatable across queries | Low: AI was trusted implicitly |

The attack, which the security community has termed slopsquatting, works by registering the hallucinated names on public package registries before developers encounter them. When a developer follows an AI suggestion and runs an install command, the package resolves successfully — not because the AI was correct, but because an attacker anticipated the hallucination and placed malicious code under that name.
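The important consequence is that "the package exists" proves nothing on its own. A rough sketch of a pre-install check in Python follows. It assumes registry metadata shaped like the PyPI JSON API response, where `releases` maps each version to a list of files carrying an `upload_time_iso_8601` field. The function names, the 90-day threshold, and the sample data are illustrative assumptions.

```python
from datetime import datetime, timedelta, timezone


def first_upload_time(metadata):
    """Earliest upload timestamp across all releases, or None if no files."""
    times = []
    for files in metadata.get("releases", {}).values():
        for f in files:
            # Normalise a trailing "Z" so older Python versions can parse it.
            stamp = f["upload_time_iso_8601"].replace("Z", "+00:00")
            times.append(datetime.fromisoformat(stamp))
    return min(times) if times else None


def vet_suggestion(metadata, now, min_age_days=90):
    """Classify an AI-suggested package before anyone installs it.

    `metadata` is the parsed registry response, or None if the registry
    reported that the package does not exist.
    """
    if metadata is None:
        return "missing"  # likely hallucinated: do not install, do not register
    first = first_upload_time(metadata)
    if first is None or now - first < timedelta(days=min_age_days):
        return "new"  # exists but very recent: review manually
    return "established"  # still check the maintainer and release history


# Hypothetical metadata for a package first published ten days before NOW.
SAMPLE = {"releases": {"0.0.1": [{"upload_time_iso_8601": "2025-01-10T08:00:00.000000Z"}]}}
NOW = datetime(2025, 1, 20, tzinfo=timezone.utc)

if __name__ == "__main__":
    print(vet_suggestion(SAMPLE, NOW))
```

Package age is only a heuristic. A slopsquatted package can sit on a registry for months before a model suggests it, so an "established" result should narrow the review, not replace it.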

Pipeline Poisoning: From Pull Request to Production

Continuous integration and continuous deployment pipelines are designed to remove human bottlenecks from the software delivery process. Code is committed, tests run automatically, artifacts are built, and deployments proceed — often without any manual intervention. This automation is a significant engineering achievement. It is also a significant attack surface.

The attacks that have targeted this infrastructure follow a pattern. An attacker compromises a widely used third-party action, either by gaining access to a maintainer's account or by exploiting the way actions are referenced. Because most workflows reference actions by a mutable tag rather than pinning them to a commit hash, the attacker can repoint the tag at modified code without changing the tag's name. Every pipeline that references that tag will silently begin executing the modified code on its next run.
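Finding these mutable references is straightforward to automate. The sketch below, in Python, scans workflow text for `uses: owner/repo@ref` lines and reports any `ref` that is not a full 40-character commit SHA. It assumes GitHub Actions-style workflow syntax, which this post does not name explicitly, and the organisation and action names in the sample are hypothetical.

```python
import re

# Matches `uses: owner/repo[/path]@ref`, with or without a leading list dash.
USES_RE = re.compile(
    r"^\s*(?:-\s*)?uses:\s*['\"]?([^@\s'\"]+)@([^\s'\"#]+)", re.MULTILINE
)
FULL_SHA_RE = re.compile(r"^[0-9a-f]{40}$")


def find_mutable_refs(workflow_text):
    """Return (action, ref) pairs whose ref is not a full commit SHA."""
    findings = []
    for action, ref in USES_RE.findall(workflow_text):
        if action.startswith("docker://"):
            continue  # container images use digests, not commit SHAs
        if not FULL_SHA_RE.match(ref):
            findings.append((action, ref))
    return findings


WORKFLOW = """\
jobs:
  build:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - uses: example-org/example-action@0123456789abcdef0123456789abcdef01234567  # v1.2.3
      - name: Lint
        uses: example-org/linter@main
"""

if __name__ == "__main__":
    for action, ref in find_mutable_refs(WORKFLOW):
        print(f"{action} is referenced by mutable ref '{ref}'")
```

Local actions referenced by path have no `@ref` and are skipped. A regular expression is a deliberate simplification here; a production check would parse the YAML properly.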

Ecosystem Collapse: The Shai-Hulud Worm

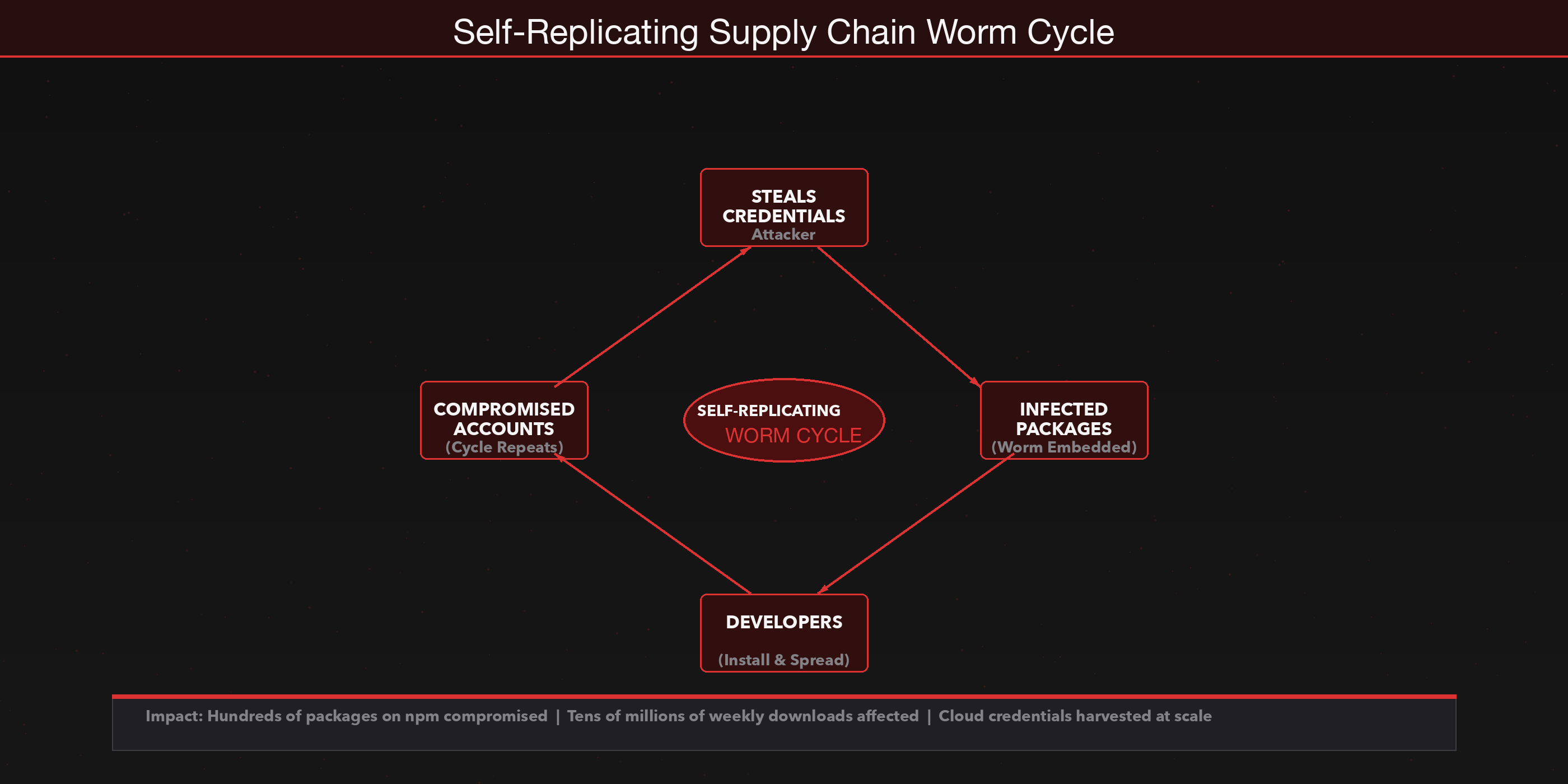

The most alarming development in supply chain security over the past year has been the emergence of self-replicating attacks that propagate through package ecosystems without ongoing attacker involvement. The mechanism is elegant in its simplicity. An attacker compromises a single maintainer account — through credential theft, token leakage, or social engineering. Using that account's publishing credentials, the attacker identifies every package the maintainer controls and injects a small payload into each one. The payload does two things: it steals the publishing tokens of any developer who installs the compromised package, and it uses those tokens to repeat the process on every package the new victim maintains.

The worm spreads from maintainer to maintainer, package to package, without the attacker needing to take any further action. The Shai-Hulud incident, which brought this technique into sharp focus, involved hundreds of packages on npm with a combined weekly download count in the tens of millions.
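To see why one stolen token can reach so far, it helps to treat the propagation described above as a reachability problem on a graph. The following Python sketch is a toy model for estimating blast radius, not a reconstruction of any real incident. The maintainer names, package names, and both mappings are invented for illustration.

```python
from collections import deque


def simulate_spread(maintains, installs, first_victim):
    """Return (compromised_maintainers, infected_packages) as sets.

    maintains: maintainer -> set of packages they can publish
    installs:  maintainer -> set of packages they install locally
    """
    compromised = {first_victim}
    infected = set()
    queue = deque([first_victim])
    while queue:
        maintainer = queue.popleft()
        # Every package this maintainer can publish is now considered infected.
        infected |= maintains.get(maintainer, set())
        # Any other maintainer who installs an infected package loses a token.
        for other, deps in installs.items():
            if other not in compromised and deps & infected:
                compromised.add(other)
                queue.append(other)
    return compromised, infected


# Hypothetical ecosystem: bob depends on alice's package, carol on bob's.
MAINTAINS = {"alice": {"pkg-a", "pkg-b"}, "bob": {"pkg-c"}, "carol": {"pkg-d"}, "dave": {"pkg-e"}}
INSTALLS = {"bob": {"pkg-b"}, "carol": {"pkg-c"}, "dave": {"pkg-z"}}

if __name__ == "__main__":
    who, what = simulate_spread(MAINTAINS, INSTALLS, "alice")
    print(sorted(who), sorted(what))
```

In this toy ecosystem a single compromised account reaches three of the four maintainers. Running the same calculation over an organisation's real dependency and ownership data shows which accounts most need hardware-backed two-factor authentication and short-lived tokens.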

Why Traditional Security Misses the Supply Chain

Most enterprise security architectures were designed to protect running systems. Endpoint detection watches for known malware signatures and suspicious process behaviour. Network monitoring inspects traffic for indicators of compromise. Vulnerability scanners check deployed software against databases of known flaws. These controls are valuable, and they catch a wide range of threats. But they share a common assumption: that the threat originates outside the development process.

Supply chain attacks violate this assumption. The malicious code arrives not as an exploit against a running service but as a legitimate-looking dependency installed during development, a trusted extension added to an editor, or a third-party action executed in a build pipeline. It passes through every security gate because it travels inside artifacts that the gates are designed to approve.

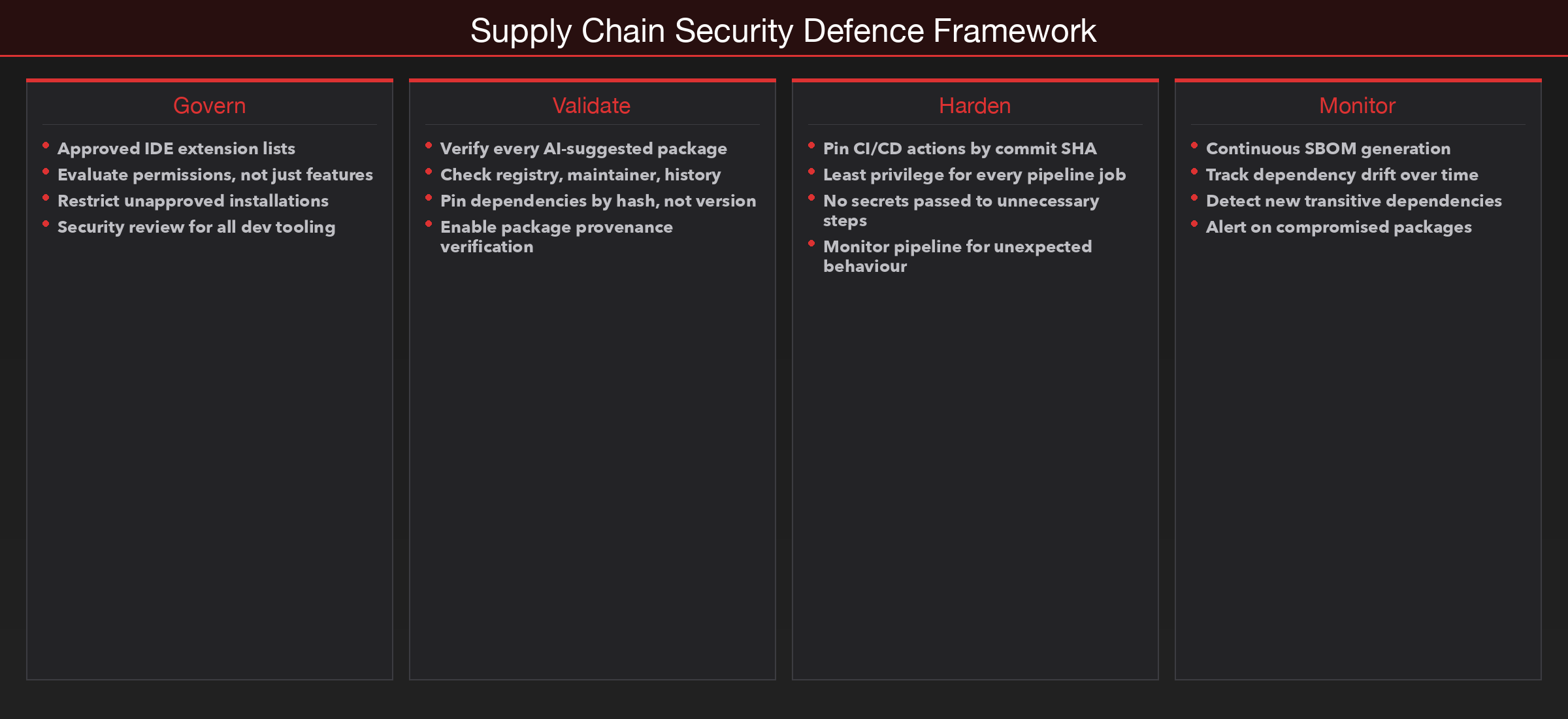

Closing this gap requires extending security visibility into the development process itself. That means understanding what dependencies your applications actually use, where they come from, and how they change over time. It means treating CI/CD pipeline configurations as infrastructure code subject to the same review and monitoring as production systems. Platforms like SecureNexus SOVA provide this visibility through continuous SBOM generation, dependency tracking, and composition analysis — ensuring security teams can see inside the supply chain, not just around it.
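Tracking how dependencies change over time can start with something as simple as comparing the SBOMs of two consecutive builds. The Python sketch below assumes CycloneDX-style JSON, where a top-level `components` array holds entries with `name` and `version` fields. It is a generic illustration and does not describe how any particular platform implements this; the sample components are hypothetical.

```python
def component_versions(sbom):
    """Map component name -> version from a CycloneDX-style SBOM dict."""
    return {c["name"]: c.get("version", "") for c in sbom.get("components", [])}


def diff_sboms(old, new):
    """Report components added, removed, or changed between two builds."""
    before, after = component_versions(old), component_versions(new)
    return {
        "added": sorted(set(after) - set(before)),
        "removed": sorted(set(before) - set(after)),
        "changed": sorted(
            name for name in set(before) & set(after) if before[name] != after[name]
        ),
    }


# Hypothetical SBOMs from two consecutive builds.
OLD_SBOM = {"components": [{"name": "pkg-a", "version": "1.0.0"},
                           {"name": "pkg-b", "version": "2.1.0"}]}
NEW_SBOM = {"components": [{"name": "pkg-a", "version": "1.0.1"},
                           {"name": "pkg-c", "version": "0.0.1"}]}

if __name__ == "__main__":
    print(diff_sboms(OLD_SBOM, NEW_SBOM))
```

Keying on name alone is a simplification: it cannot represent two versions of the same component in one build. Even so, an unexpected entry under `added` in a build that was not supposed to change dependencies is exactly the kind of signal perimeter tooling never sees.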

What Organisations Should Do

The attacks described in this post exploit different mechanisms, but they share a common structure: they abuse trust that was granted without adequate verification. Defending against them does not require exotic technology. It requires treating development infrastructure with the same security discipline applied to production infrastructure.

| Defence Area | Action | Why It Matters |
|---|---|---|
| IDE Extensions | Maintain approved extension lists; evaluate permissions, not just features | Extensions have full machine access, so treat them like software installs |
| Dependencies | Verify every package before installing; check registry, maintainer, history | AI suggestions and search results can point to malicious packages |
| Pinning | Pin dependencies by hash, not version; pin CI/CD actions by commit SHA | Tags and versions can be reassigned silently |
| Pipelines | Least privilege for every job; no secrets passed to unnecessary steps | Compromised actions extract secrets from the runner environment |
| Provenance | Enable package provenance verification where available | Cryptographic attestation links packages to source code |
| Awareness | Train developers to recognise suspicious packages and extensions | Supply chain attacks look like normal development activity |
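The "Pinning" row deserves a concrete illustration, because it is the control that makes silent reassignment detectable. The Python sketch below shows the underlying idea: record a SHA-256 digest when an artifact is reviewed, then refuse anything whose digest differs. The function names and sample bytes are hypothetical, and in practice a package manager's own hash-checking mode should do this work instead of custom code.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of the given bytes."""
    return hashlib.sha256(data).hexdigest()


def verify_artifact(data: bytes, pinned_sha256: str) -> bool:
    """True only if the artifact's digest equals the pinned value."""
    return hmac.compare_digest(sha256_hex(data), pinned_sha256.lower())


# Hypothetical artifact: the digest is recorded once, at review time.
REVIEWED = b"example package contents"
PINNED = sha256_hex(REVIEWED)

# Later, the same name and version resolves to different bytes.
TAMPERED = REVIEWED + b" plus an injected payload"

if __name__ == "__main__":
    print("reviewed copy accepted:", verify_artifact(REVIEWED, PINNED))
    print("tampered copy accepted:", verify_artifact(TAMPERED, PINNED))
```

A version string or tag is a label that someone else controls. A digest is a property of the bytes themselves, which is why pinning by hash or commit SHA holds up when a label is reassigned.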

Closing

The software supply chain is not a single system that can be secured with a single control. It is a chain of trust decisions — each one made by a developer, an automation, or an organisation — that collectively determine whether the software reaching production is the software that was intended.

Every attack examined in this post exploited a point where trust was extended without sufficient verification. A marketplace that listed extensions without deep review. An AI tool that suggested packages without checking whether they existed. A pipeline that executed third-party code referenced by a mutable tag. A registry that allowed publishing with a single compromised token.

The enemy is not at the gates. It is in the editor, the terminal, the pipeline, the registry. Securing the supply chain means recognising that every tool in the development process is part of the attack surface, and treating it accordingly.

SecureNexus SOVA helps organisations gain this visibility — providing continuous SBOM/CBOM/AI-BOM generation, dependency risk tracking, and composition analysis across the entire software supply chain. Because you cannot secure what you cannot see.

About the Author

Cybersecurity expert with extensive experience in threat analysis and security architecture.