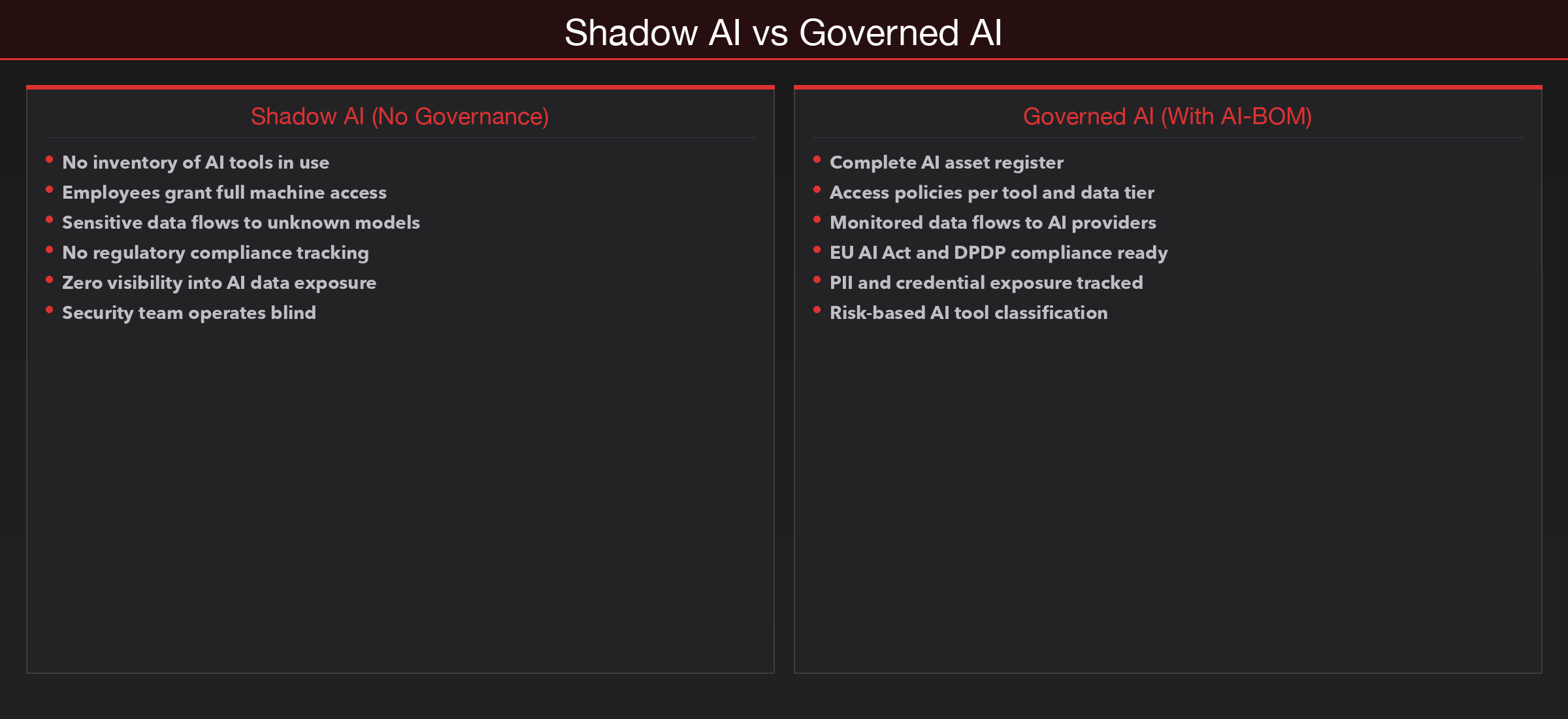

Shadow AI is spreading faster than shadow IT ever did. Employees are granting AI tools full machine access, pushing sensitive data into models, and nobody is tracking which tools are in use. Here is why AI-BOM and AI asset registers are becoming essential governance infrastructure.

Your Employees Are Feeding Sensitive Data to AI Models — And You Have No Inventory of Which Ones

The Problem Nobody Is Tracking

A developer on your platform team installs an AI coding assistant, grants it full access to the local file system, and starts feeding it production configuration files to "help debug a deployment issue." A product manager pastes an entire customer dataset into a generative AI chatbot to generate a quarterly analysis. A legal team member uploads a draft acquisition agreement into an AI summarisation tool they found on Google.

None of these tools are registered anywhere. None of them went through security review. And in most organisations, nobody in the security team even knows they exist.

This is the AI governance gap — and it is widening faster than any previous shadow IT problem. The difference is the blast radius. When an employee uses an unapproved SaaS tool, the risk is typically limited to the data they upload. When an employee gives an AI tool full system access — file system, terminal, clipboard, browser history — the exposure surface is the entire machine.

The AI Adoption Problem Security Teams Cannot See

The core issue is straightforward: generative AI adoption is happening faster than governance frameworks can keep pace. Employees are not waiting for security approval. They are downloading AI-powered IDEs, browser extensions, desktop assistants, and API integrations — and they are feeding these tools with whatever data is needed to get a useful output.

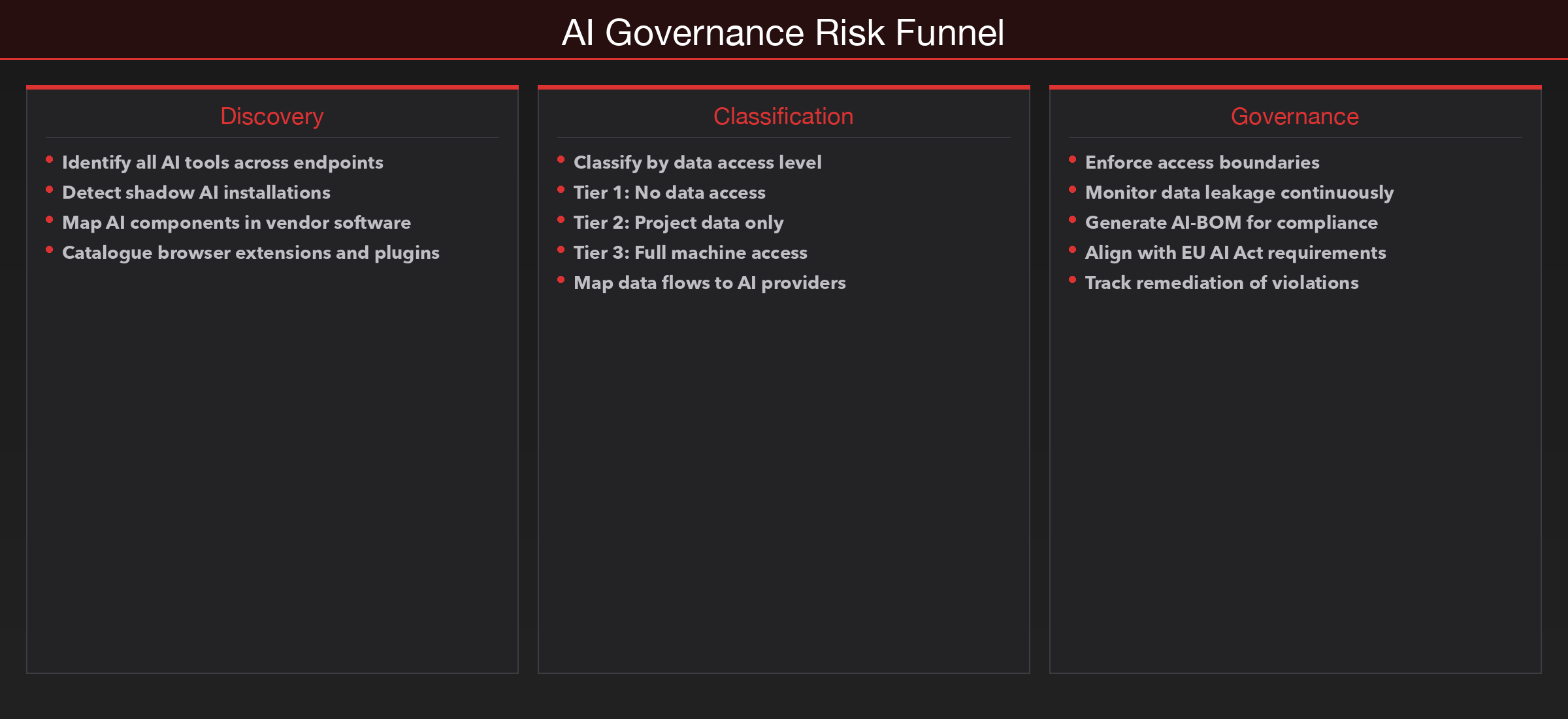

The challenge breaks down into three categories that security teams are consistently missing.

Shadow AI Is the New Shadow IT — But Worse

Shadow IT was manageable because most SaaS tools required an email signup, a credit card, and a browser. Discovery was possible through SSO logs, expense reports, and network traffic analysis. Shadow AI is harder. Many AI tools run locally — IDE extensions, CLI tools, desktop applications. They do not generate SSO events. They may not produce identifiable network traffic. Some operate entirely offline after initial setup.
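
Locally installed tools can still be found from the endpoint itself. The following is a minimal sketch that assumes a security-maintained watchlist of extension IDs; the IDs shown are hypothetical placeholders, not real extensions. It lists VS Code extensions installed under the default per-user directory (`~/.vscode/extensions`, where each extension sits in a folder named `<publisher>.<name>-<version>`, sometimes with a platform suffix) and reports the ones on the watchlist.

```python
import re
from pathlib import Path

# Hypothetical watchlist of extension IDs ("publisher.name") treated as AI tools.
# These are placeholders, not real extensions.
AI_EXTENSION_WATCHLIST = {"example-vendor.ai-code-assistant", "example-vendor.ai-chat"}

# Installed extension folders look like "<publisher>.<name>-<version>[-<platform>]".
# The lazy group stops at the first "-<major>.<minor>.<patch>" sequence.
_FOLDER_RE = re.compile(r"^(?P<ext_id>.+?)-\d+\.\d+\.\d+")


def find_ai_extensions(extensions_dir: Path) -> list[str]:
    """Return the watchlisted extension IDs installed in extensions_dir."""
    if not extensions_dir.is_dir():
        return []
    found = set()
    for entry in extensions_dir.iterdir():
        if not entry.is_dir():
            continue  # skip bookkeeping files such as extensions.json
        match = _FOLDER_RE.match(entry.name)
        if match and match.group("ext_id").lower() in AI_EXTENSION_WATCHLIST:
            found.add(match.group("ext_id").lower())
    return sorted(found)


if __name__ == "__main__":
    default_dir = Path.home() / ".vscode" / "extensions"
    for ext_id in find_ai_extensions(default_dir):
        print(f"AI extension installed: {ext_id}")
```

Other IDEs, CLI tools and desktop apps would need their own probes, so treat this as one input to an inventory rather than a complete discovery method.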

The result is that most organisations have no idea how many AI tools are in use across their environment. There is no AI asset register, no inventory, no classification of which tools have access to what data. Security teams are operating completely blind.

The Full Machine Access Problem

This is where the risk escalates from "data leakage" to "complete exposure." Modern AI coding assistants, such as AI code completion tools, AI-powered IDEs, AI coding CLI assistants and similar tools, request broad permissions by design. They need file system access to read code and terminal access to run commands. Some also request clipboard access, browser integration, or the ability to execute arbitrary shell commands.

When a developer grants these permissions, the AI tool can potentially read anything the developer's user account can read on that machine: SSH keys, environment variables holding database credentials, API tokens in configuration files, internal documentation, and customer data in local databases. The developer thinks they are getting a productivity boost. What they may actually have done is give a third-party AI model, running on infrastructure they do not control, access to their entire working environment.
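
The following minimal sketch makes that exposure concrete. It lists common credential-bearing files under a home directory, meaning files that any tool running with the developer's permissions could read. The pattern list is an illustrative assumption and is far from exhaustive. Scanning `**/.env` across a large home directory can also be slow.

```python
import os
from pathlib import Path

# Illustrative (not exhaustive) glob patterns, relative to the home directory,
# for files that commonly hold credentials on a developer workstation.
SENSITIVE_PATTERNS = [
    ".ssh/id_*",            # SSH keys (private and public)
    ".aws/credentials",     # AWS CLI credentials
    ".kube/config",         # Kubernetes cluster credentials
    ".docker/config.json",  # container registry auth
    ".netrc",               # stored login credentials
    "**/.env",              # per-project environment files, searched recursively
]


def readable_secrets(home: Path) -> list[Path]:
    """List files matching SENSITIVE_PATTERNS that the current user can read."""
    hits = set()
    for pattern in SENSITIVE_PATTERNS:
        for path in home.glob(pattern):
            if path.is_file() and os.access(path, os.R_OK):
                hits.add(path)
    return sorted(hits)


if __name__ == "__main__":
    for path in readable_secrets(Path.home()):
        print(path)
```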

| AI Tool Type | Access Level | Data Exposure Risk |
|---|---|---|
| Browser-based chatbot (generative AI chatbot, AI assistant) | Prompt text and file uploads only | Medium: limited to what the user pastes or uploads |
| IDE extension (AI code completion tool, AI-powered IDE) | Full project directory, open files | High: source code, configs, secrets in code |
| CLI assistant (AI coding CLI assistant, AI CLI tool) | File system + terminal + shell commands | Critical: entire machine exposure |
| Desktop AI app with integrations | Calendar, email, documents, browser | Critical: corporate data across systems |
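
The table's logic can be expressed as a simple rule: a tool's risk follows the broadest capability it has been granted. The following minimal sketch uses hypothetical capability labels that mirror the table's access-level column. Unknown capabilities are treated as critical so that they get reviewed rather than ignored.

```python
from enum import IntEnum


class Risk(IntEnum):
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4


# Capability -> risk, mirroring the table above. Labels are hypothetical.
CAPABILITY_RISK = {
    "prompt_text": Risk.MEDIUM,
    "file_upload": Risk.MEDIUM,
    "project_directory": Risk.HIGH,
    "filesystem": Risk.CRITICAL,
    "terminal": Risk.CRITICAL,
    "shell_exec": Risk.CRITICAL,
    "calendar": Risk.CRITICAL,
    "email": Risk.CRITICAL,
    "documents": Risk.CRITICAL,
    "browser": Risk.CRITICAL,
}


def risk_for(capabilities: set[str]) -> Risk:
    """Return the highest risk implied by any granted capability.

    Unknown capabilities count as CRITICAL; an empty set floors at MEDIUM,
    the lowest tier in the table.
    """
    return max((CAPABILITY_RISK.get(c, Risk.CRITICAL) for c in capabilities),
               default=Risk.MEDIUM)
```

For example, `risk_for({"project_directory", "terminal"})` returns `Risk.CRITICAL`, because the terminal grant dominates.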

Data Leakage Is Continuous, Not Episodic

Unlike a traditional data breach, AI data leakage is not a single event. It is a continuous stream. Every prompt, every file attachment, every code snippet pasted into an AI tool is a potential data transfer to a third-party system. Over weeks and months, the aggregate volume of sensitive data flowing to AI model providers can be enormous — proprietary source code, customer PII, financial projections, internal security assessments, legal documents.

No single interaction triggers an alert. But the cumulative exposure is significant.
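
The following sketch shows the difference between per-event and cumulative alerting. It assumes a hypothetical stream of prompt events, each carrying a count of sensitive-data matches reported by whatever DLP detector is in place. A per-event threshold never fires on a steady trickle of small leaks, while a running per-tool total does.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class PromptEvent:
    tool: str            # hypothetical tool identifier from an AI asset register
    sensitive_hits: int  # sensitive-data matches a DLP detector found in this prompt


def alerts(events, per_event_limit: int, cumulative_limit: int) -> list[str]:
    """Return alerts for single large events and for cumulative per-tool totals."""
    totals: dict[str, int] = defaultdict(int)
    flagged: set[str] = set()
    messages = []
    for event in events:
        if event.sensitive_hits > per_event_limit:
            messages.append(f"single-event: {event.tool}")
        totals[event.tool] += event.sensitive_hits
        if totals[event.tool] > cumulative_limit and event.tool not in flagged:
            flagged.add(event.tool)  # raise the cumulative alert once per tool
            messages.append(f"cumulative: {event.tool} ({totals[event.tool]} hits)")
    return messages
```

With 40 prompts of 3 hits each, a per-event limit of 10 and a cumulative limit of 100, no single prompt alerts. The 34th prompt pushes the running total to 102 and produces one cumulative alert.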

How This Plays Out: A Fintech Platform With No AI Governance

Consider a mid-size fintech company — 400 employees, a modern cloud-native stack, and a security team that considers itself mature. They have SOC 2 certification, quarterly penetration tests, and a well-staffed security operations centre.

Over six months, AI adoption spreads organically. Engineering adopts an AI code completion tool across 60 developer machines. The data science team starts using a paid generative AI chatbot plan through a shared corporate account. Three product managers sign up for AI writing tools. Two DevOps engineers install an AI-powered CLI assistant with full terminal access.

When the CISO finally commissions an audit, the findings are alarming. Fourteen different AI tools are in active use. Seven have access to production-adjacent data. Three have file system access on developer machines that also contain production SSH keys. The shared generative AI chatbot account has six months of conversation history containing customer data, internal architecture diagrams, and fragments of proprietary trading algorithms.

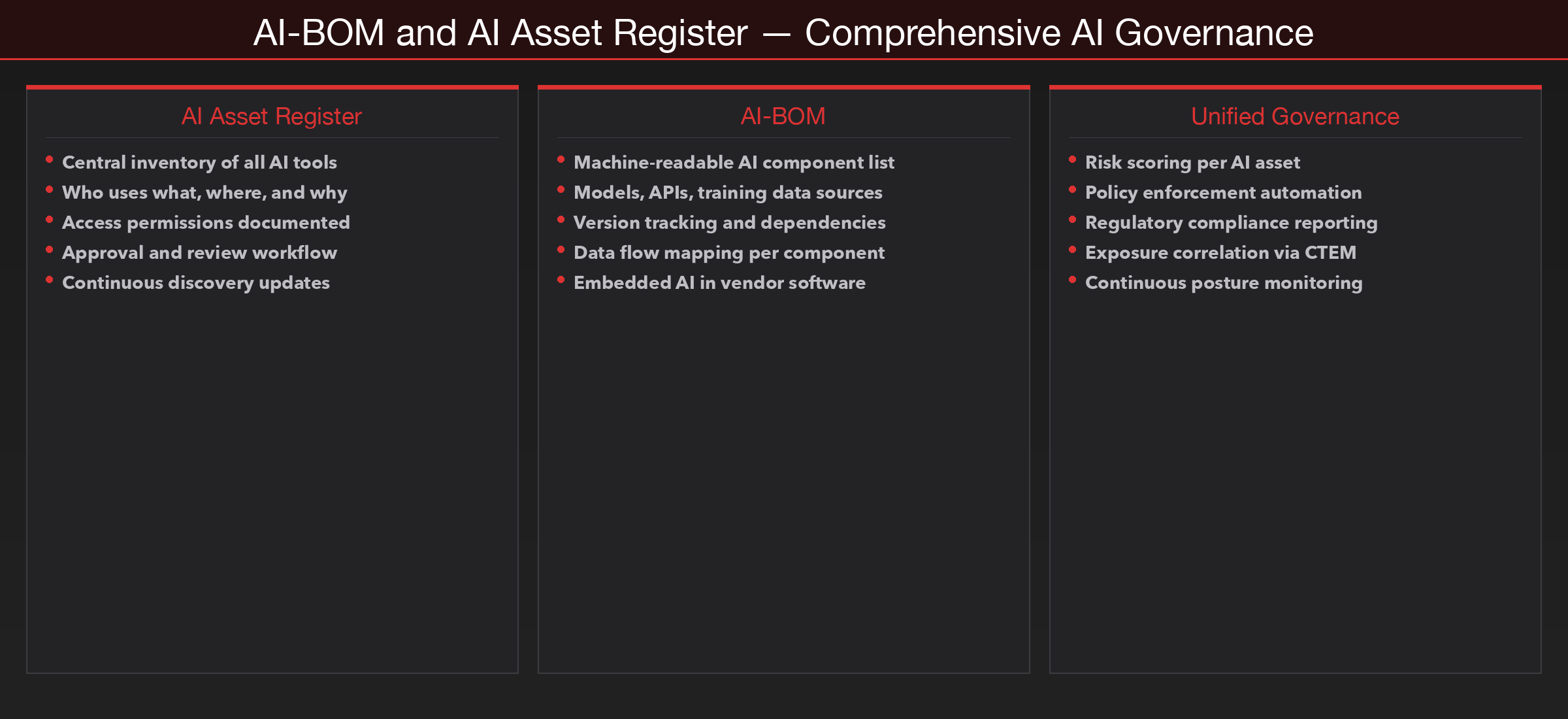

The organisation has no AI-BOM — no Bill of Materials documenting which AI components are embedded in their software, which AI services their employees use, or what data flows to which AI providers. This is exactly the kind of visibility gap that platforms like SecureNexus SOVA are designed to close. SOVA provides AI-BOM capability — a structured inventory of AI assets across the organisation, tracking which tools are in use, what access they have, what data flows to which models, and where governance gaps exist.

Building an AI Governance Framework That Actually Works

Start With an AI Asset Register

You cannot govern what you cannot see. The first step is discovering and cataloguing every AI tool, model, and integration in use across the organisation. This includes commercial AI services, open-source models deployed internally, AI components embedded in vendor software, AI-powered browser extensions, and locally installed AI tools on developer workstations.
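
For commercial AI services, one starting point for discovery is to match outbound DNS or proxy logs against a list of AI API domains. This only catches tools that produce network traffic, which, as noted earlier, many local tools may not. The following minimal sketch assumes a hypothetical log format of `<timestamp> <source_host> <destination_hostname>` per line and uses placeholder domains.

```python
from collections import defaultdict

# Placeholder AI endpoint domains; a real deployment would maintain this list.
AI_ENDPOINT_DOMAINS = {"api.example-llm.com", "inference.example-ai.net"}


def matches_ai_domain(hostname: str) -> bool:
    """True if hostname equals, or is a subdomain of, a listed AI domain."""
    hostname = hostname.lower().rstrip(".")
    return any(hostname == d or hostname.endswith("." + d) for d in AI_ENDPOINT_DOMAINS)


def ai_usage_by_host(log_lines) -> dict[str, set[str]]:
    """Map each source host to the AI endpoints it contacted.

    Assumes a hypothetical whitespace-separated format:
    <timestamp> <source_host> <destination_hostname>
    """
    usage: dict[str, set[str]] = defaultdict(set)
    for line in log_lines:
        parts = line.split()
        if len(parts) != 3:
            continue  # skip malformed lines
        _, source, destination = parts
        if matches_ai_domain(destination):
            usage[source].add(destination.lower().rstrip("."))
    return dict(usage)
```

Suffix matching on `"." + domain` avoids false positives such as `notapi.example-llm.com.evil.org`, which a naive substring check would flag.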

SecureNexus SOVA provides this through AI-BOM — a machine-readable inventory of AI assets that tracks not just what exists, but what each AI component can access, what data it processes, and what governance controls apply to it.
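
The following sketch shows what one entry in such an inventory might contain. The field names are illustrative assumptions, not SOVA's schema or any standard's. The point is that each record captures access and data flows alongside the tool's name, and that the register serialises to a machine-readable document.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class AIAsset:
    """One AI-BOM entry. Field names are illustrative, not a standard schema."""
    asset_id: str
    name: str
    kind: str        # e.g. "ide_extension", "cli_assistant", "saas_chatbot"
    owner: str       # accountable team or person
    deployment: str  # e.g. "local", "vendor_hosted", "self_hosted"
    access: list[str] = field(default_factory=list)        # granted capabilities
    data_classes: list[str] = field(default_factory=list)  # data it may process
    model_provider: str = "unknown"
    approved: bool = False


def to_ai_bom(assets: list[AIAsset]) -> str:
    """Serialise the register as a JSON document."""
    return json.dumps({"assets": [asdict(a) for a in assets]}, indent=2)


if __name__ == "__main__":
    cli = AIAsset(
        asset_id="ai-0007",
        name="AI coding CLI assistant",
        kind="cli_assistant",
        owner="platform-devops",
        deployment="local",
        access=["filesystem", "terminal", "shell_exec"],
        data_classes=["source_code", "credentials"],
    )
    print(to_ai_bom([cli]))
```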

Classify, Monitor, and Enforce

Not all AI usage carries the same risk. Classify AI tools into tiers based on the sensitivity of the data they can access, and apply controls accordingly. Implement data flow monitoring to track what flows to AI model endpoints. Enforce the principle of least privilege: AI tools should not have blanket access to everything on a machine.
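
The classify-then-control step can be sketched as two small functions. One assigns a tier from the most sensitive data class a tool can reach. The other reports which of that tier's required controls are missing. The sensitivity rankings and control names below are hypothetical placeholders for an organisation's own policy. Unknown data classes rank as most sensitive so they fail safe.

```python
# Hypothetical sensitivity ranking of data classes a tool can reach (0 = lowest).
DATA_SENSITIVITY = {
    "public": 0,
    "internal": 1,
    "source_code": 2,
    "customer_pii": 3,
    "credentials": 3,
}

# Hypothetical controls required at each tier; list index = tier.
REQUIRED_CONTROLS = [
    set(),                                                     # tier 0: public data only
    {"approved_vendor"},                                       # tier 1
    {"approved_vendor", "security_review"},                    # tier 2
    {"approved_vendor", "security_review", "dlp_monitoring"},  # tier 3
]


def tier_for(data_classes: set[str]) -> int:
    """Tier = highest sensitivity among reachable data; unknown classes rank highest."""
    return max((DATA_SENSITIVITY.get(d, 3) for d in data_classes), default=0)


def missing_controls(data_classes: set[str], controls_in_place: set[str]) -> set[str]:
    """Controls the tool's tier requires that are not yet in place."""
    return REQUIRED_CONTROLS[tier_for(data_classes)] - controls_in_place
```

For example, a tool that can reach source code but has only vendor approval would report `{"security_review"}` as missing.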

The EU AI Act, India's DPDP Act, and emerging AI-specific regulations globally are creating compliance requirements around AI asset documentation, risk assessment, and data handling. Organisations that do not have an AI asset register today will face significant compliance gaps when these regulations take full effect.

Key Takeaways

- Shadow AI is growing faster than shadow IT ever did, and it is harder to detect. Most organisations have no inventory of AI tools in use and no visibility into what data flows to AI model providers.

- Full machine access granted to AI tools is the highest-risk pattern most security teams are not monitoring. When a developer gives an AI assistant terminal and file system access, the exposure surface is the entire workstation.

- AI data leakage is continuous, not episodic. Every prompt is a potential data transfer. The cumulative exposure over months of unmonitored AI usage can be enormous.

- An AI-BOM is no longer optional. Just as SBOM brought visibility to open-source dependencies, AI-BOM provides a structured inventory of AI assets, their access levels, and their data flows. SecureNexus SOVA delivers this as part of its composition visibility platform.

- Governance frameworks must be in place before adoption reaches critical mass. Retrofitting controls after hundreds of employees are actively using ungoverned AI tools is orders of magnitude harder than establishing the framework upfront.

The Exposure Management Perspective

AI governance is fundamentally an exposure management problem. The AI tools exist. The data is flowing. The question is whether your security team can see it, measure it, and control it.

SecureNexus CTEM provides the correlation layer — connecting AI asset data from SOVA with endpoint exposure from ASM, cloud posture data from CSPM, and vulnerability intelligence — to give security leaders a unified view of AI-related risk across the organisation. When a developer workstation has an AI tool with full system access and also contains production credentials that appear in external exposure data, that is a prioritised risk that demands immediate attention.
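
The correlation rule in that example can be sketched as a join across per-workstation data. The sketch takes three hypothetical inputs: the AI tools and capabilities on each host, the production credentials present on each host, and the credential identifiers seen in external exposure data. It ranks first the hosts where the most of those risk factors coincide. It only illustrates the idea and does not describe how SecureNexus CTEM is implemented.

```python
# Capabilities treated as "full system access" for this sketch (hypothetical labels).
FULL_ACCESS = {"filesystem", "terminal", "shell_exec"}


def prioritise(ai_tools_by_host, creds_by_host, exposed_creds):
    """Return (host, factor_count, reasons) tuples, most coinciding factors first.

    ai_tools_by_host: {host: {tool_name: set_of_capabilities}}
    creds_by_host:    {host: set_of_credential_ids}
    exposed_creds:    set of credential ids seen in external exposure data
    """
    ranked = []
    for host, tools in ai_tools_by_host.items():
        reasons = []
        broad = sorted(name for name, caps in tools.items() if caps & FULL_ACCESS)
        if broad:
            reasons.append(f"full-access AI tools: {', '.join(broad)}")
        creds = creds_by_host.get(host, set())
        if creds:
            reasons.append(f"{len(creds)} production credential(s) on host")
        leaked = sorted(creds & exposed_creds)
        if leaked:
            reasons.append(f"externally exposed: {', '.join(leaked)}")
        if reasons:
            ranked.append((host, len(reasons), reasons))
    # Most coinciding factors first; host name breaks ties deterministically.
    ranked.sort(key=lambda r: (-r[1], r[0]))
    return ranked
```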

The organisations that will manage AI adoption successfully are the ones building governance infrastructure now — not after the first incident.

Take Control of Your AI Security Posture

Ready to bring visibility and governance to your AI ecosystem? SecureNexus SOVA provides AI-BOM, AI asset registration, and continuous composition monitoring — giving your security team the inventory and control they need before the next AI-related exposure.

Explore how SecureNexus can help your organisation govern AI adoption securely:

- SOVA: AI-BOM, SBOM, CBOM & Supply Chain Visibility

- ASM: Attack Surface Discovery & Monitoring

- API POS: API Discovery & Posture Management

- GRC Suite: Governance, Risk & Compliance

- TPRM: Third-Party Risk Management

About the Author

Yash Kumar is a Lead in Research & Innovation, focused on exploring emerging technologies and turning ideas into practical solutions. He works on driving experimentation, strategic insights, and new initiatives that help organizations stay ahead of industry trends.