How JavaScript and Mobile Crypto Creates a False Sense of Security — and Quietly Defeats Your WAF

Client-Side Encryption Is Not a Security Strategy

Here is a pattern that keeps showing up in penetration tests and security assessments: the development team encrypts API request payloads using AES or RSA in the browser or mobile client before sending them to the server. The stated reason is usually “security.” The encryption key, the algorithm, and the entire logic sit in a JavaScript bundle or a decompilable mobile binary. Anyone with a browser’s developer tools or a proxy like Burp Suite can extract the key in minutes.

Yet teams continue to ship this pattern, sometimes spending weeks implementing complex cryptographic routines that an attacker bypasses in a single afternoon. The real cost is not just wasted engineering time. Client-side encryption actively undermines the security infrastructure that is supposed to protect the application — most critically, the Web Application Firewall.

The Problem: Encryption as a Substitute for Fixing Vulnerabilities

The motivation behind client-side encryption usually falls into one of three categories. First, the team discovers a vulnerability — SQL injection, parameter tampering, insecure direct object references — and instead of fixing the root cause, they encrypt the request body so that “attackers can’t see the parameters.” Second, someone on the team believes that encrypting API traffic adds “an extra layer of security” on top of TLS. Third, a compliance or audit finding demands “data protection in transit,” and the team interprets this as needing application-layer encryption, even though TLS already satisfies the requirement.

In all three cases, the encryption is security theatre. The application still has the vulnerability. The parameters are still injectable. The object references are still guessable. The only thing that has changed is that now the attacker has to decrypt the payload first — using a key that was delivered to them in the client code.

| What the Developer Thinks | What Actually Happens |
|---|---|
| Attacker can't read API parameters | Attacker extracts the key from the JS bundle or APK in minutes |
| WAF still protects the endpoint | WAF is completely blind to encrypted payloads |
| Extra security layer on top of TLS | No security benefit beyond what TLS already provides |
| Compiled mobile code protects the key | jadx decompiles the APK statically; Frida hooks the crypto calls at runtime |
| Obfuscation makes extraction hard | Automated tools handle obfuscation routinely |

Why Client-Side Crypto Falls Apart

The Key Distribution Problem You Cannot Solve

Cryptography’s strength comes from key secrecy. In client-side encryption, the key must be available to the JavaScript runtime or the mobile application. That means it is either hardcoded in the source, fetched from an API endpoint at runtime, or derived from values the client already possesses. In every scenario, the attacker has access to the same key. Browser developer tools, mobile reverse engineering frameworks like Frida, or simple network interception reveal the key material. Obfuscation tools delay extraction by hours, not months. Determined attackers — and automated tools — treat obfuscated JavaScript as a minor inconvenience, not a barrier.
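To show how little protection a key gets when it ships with the client, here is a minimal Python sketch of the kind of scan an assessor might run against a downloaded bundle. Everything in it is a hypothetical illustration, not a real tool. That includes the two regex patterns: one for a long string literal assigned to a name containing `key` or `secret`, and one for a CryptoJS-style `enc.Utf8.parse("...")` call. The sample bundle and the `find_key_candidates` function are hypothetical too.

```python
import re

# Hypothetical patterns: a long literal assigned to a "key"/"secret"-like name,
# or a literal passed to a CryptoJS-style enc.Utf8.parse() call.
KEY_PATTERNS = [
    re.compile(r"""(?i)\b\w*(?:key|secret)\w*\s*[:=]\s*["']([A-Za-z0-9+/=]{16,})["']"""),
    re.compile(r"""enc\.Utf8\.parse\(\s*["']([^"']{16,})["']\s*\)"""),
]


def find_key_candidates(bundle_source: str) -> list[str]:
    """Return string literals in a JS bundle that look like embedded keys."""
    found: list[str] = []
    for pattern in KEY_PATTERNS:
        for match in pattern.finditer(bundle_source):
            candidate = match.group(1)
            if candidate not in found:
                found.append(candidate)
    return found


# A minified snippet of the sort that ships to every browser (made-up values).
sample_bundle = (
    'var a=CryptoJS.enc.Utf8.parse("3f9a1c7e5b2d4f8a");'
    'const apiKey="c2VjcmV0LWtleS0xMjM0NTY3OA==";function e(t){return t}'
)

print(find_key_candidates(sample_bundle))
```

In practice an assessor often skips scanning altogether. Setting a breakpoint on the encryption call in the browser's developer tools also catches keys fetched at runtime. The scan only shows how cheap the hardcoded case is.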

How This Defeats Your WAF

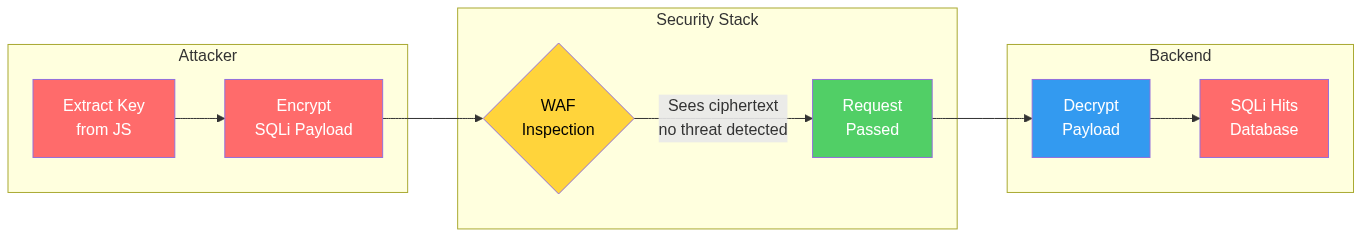

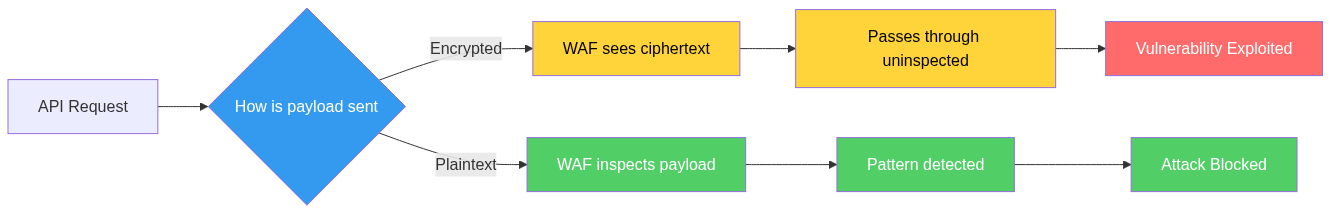

This is where the real damage happens. A Web Application Firewall inspects HTTP request and response payloads for malicious patterns: SQL injection signatures, XSS payloads, command injection strings, path traversals. When the application encrypts the request body before sending it, the WAF sees ciphertext. It cannot inspect what it cannot read.

Consider the attack flow. An application encrypts all API requests using AES-256-CBC with a key embedded in the JavaScript bundle. An attacker extracts the key, crafts a SQL injection payload, encrypts it with the same key, and sends the request. The WAF sees a Base64-encoded encrypted blob. Every signature rule, every anomaly detection model, every pattern match — all of it is blind. The encrypted payload passes through the WAF untouched and hits the vulnerable backend, where the server decrypts it and feeds the malicious input directly into the database query.

The team has effectively built a tunnel that bypasses their own security controls. The WAF becomes an expensive piece of infrastructure that protects against nothing — precisely because the application’s own encryption blinds it.
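A minimal Python sketch of that flow follows. It uses three simplified regex signatures as a stand-in for a WAF rule set. Python's standard library has no AES, so the sketch swaps in a toy stream cipher (HMAC-SHA256 in counter mode) in place of the AES-256-CBC in the example. The key, the `data` wrapper field, and all function names are hypothetical. The property being demonstrated does not depend on the cipher: once the body is ciphertext, pattern-matching rules have nothing to match.

```python
import base64
import hashlib
import hmac
import json
import os
import re

# Hypothetical key an attacker has copied out of the client bundle.
EXTRACTED_KEY = b"3f9a1c7e5b2d4f8a"


def _keystream_xor(key: bytes, nonce: bytes, data: bytes) -> bytes:
    """XOR data with an HMAC-SHA256 counter-mode keystream (toy cipher, NOT AES)."""
    out = bytearray()
    for offset in range(0, len(data), 32):
        counter = (offset // 32).to_bytes(8, "big")
        block = hmac.new(key, nonce + counter, hashlib.sha256).digest()
        out.extend(b ^ k for b, k in zip(data[offset:offset + 32], block))
    return bytes(out)


def toy_encrypt(key: bytes, plaintext: bytes) -> bytes:
    nonce = os.urandom(16)  # fresh nonce, prepended to the ciphertext
    return nonce + _keystream_xor(key, nonce, plaintext)


def toy_decrypt(key: bytes, blob: bytes) -> bytes:
    return _keystream_xor(key, blob[:16], blob[16:])


# Simplified WAF signatures applied to the raw request body.
WAF_SIGNATURES = [
    re.compile(r"(?i)'\s*or\s+'?1'?\s*=\s*'?1"),  # tautology-style SQL injection
    re.compile(r"(?i)\bunion\s+select\b"),
    re.compile(r"(?i)<script\b"),
]


def waf_blocks(body: str) -> bool:
    return any(sig.search(body) for sig in WAF_SIGNATURES)


# The attacker crafts an injection, then encrypts it exactly as the client would.
payload = json.dumps({"account_id": "1001' OR '1'='1"})
ciphertext = toy_encrypt(EXTRACTED_KEY, payload.encode())
encrypted_body = json.dumps({"data": base64.b64encode(ciphertext).decode()})

print(waf_blocks(payload))         # True: plaintext injection matches a signature
print(waf_blocks(encrypted_body))  # False: only Base64 ciphertext is visible

# Behind the WAF, the backend decrypts and receives the original injection string.
received = json.loads(
    toy_decrypt(EXTRACTED_KEY, base64.b64decode(json.loads(encrypted_body)["data"]))
)
print(received["account_id"])      # 1001' OR '1'='1
```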

Security teams using platforms like SecureNexus API POS often discover this pattern during API posture assessments, where encrypted request bodies are flagged as opaque traffic that inline security controls cannot inspect. It is one of the most common indicators that an API’s security architecture has a fundamental design flaw.

| Request Type | What WAF Sees | Actual Payload | Result |
|---|---|---|---|
| Normal (no encryption) | JSON with parameters | JSON with parameters | Inspected |
| Client-side encrypted | Base64 blob | SQL injection | Bypassed |
| Obfuscated + encrypted | Nested encoded string | XSS payload | Bypassed |
| Mobile app encrypted | Binary ciphertext | IDOR manipulation | Bypassed |

The Mobile Variant Is Worse

On mobile platforms, teams often assume that compiled code is harder to reverse-engineer than JavaScript. This is only marginally true. Tools like Frida, Objection, and jadx make it straightforward to hook into encryption functions at runtime, dump keys from memory, or patch the binary to disable encryption entirely. Some teams implement certificate pinning alongside client-side encryption, which adds friction for the attacker but does not change the fundamental problem: the key is in the client, and the client belongs to the attacker.

The mobile context adds another wrinkle. Many mobile security testing frameworks automate the process of identifying and bypassing client-side encryption. What a skilled attacker used to do manually in a few hours, automated tools now accomplish in minutes. The encryption provides almost zero additional time-to-compromise while adding significant complexity to the application.

Scenario: A Fintech Platform’s Expensive Lesson

A mid-sized fintech company runs a customer-facing API serving a React frontend and native mobile applications. During a routine security assessment, the team discovers multiple IDOR vulnerabilities in account endpoints. Changing the account ID in the request reveals other customers’ transaction histories.

Instead of implementing proper server-side authorization checks, the development team decides to encrypt the entire request payload using AES-GCM with a key fetched at login. Their reasoning: if the attacker cannot see the account ID parameter, they cannot tamper with it. The implementation takes three sprints. The team writes encryption libraries for the web frontend, the iOS app, and the Android app. They update the backend to decrypt every incoming request before processing it.

Six months later, a penetration tester extracts the AES key, which the JavaScript client receives at login and holds in memory, in under fifteen minutes. They write a simple Python script that encrypts arbitrary payloads using the extracted key. Every IDOR vulnerability still works. But now the WAF, for which the company pays significant licensing fees, cannot detect any of the malicious requests. The SIEM sees no alerts. The API gateway logs show only encrypted blobs with no useful metadata for incident investigation.

The penetration test report notes that the company spent three sprints building infrastructure that made their application harder to defend while leaving the original vulnerabilities completely unpatched.

When the security team later mapped their API landscape using SecureNexus API POS, they found that seventeen additional API endpoints had been deployed during the encryption project — most of them undocumented — including the key-exchange endpoint itself, which had no rate limiting and no authentication beyond a session token. SecureNexus CTEM correlated this with the IDOR findings to show that the actual exposure had increased, not decreased, since the encryption was implemented.

What Security Teams Should Do Instead

Fix the Vulnerability, Not the Visibility

If an endpoint has an IDOR vulnerability, implement server-side authorization. If there is SQL injection, use parameterized queries. If parameters can be tampered with, validate them on the server. Client-side encryption does not fix any of these problems. It hides them from your own security tools while leaving them fully exploitable by anyone willing to spend thirty minutes extracting a key.
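Here is a minimal Python sketch of those three server-side fixes, using the standard library's `sqlite3` module. The schema, table names, and `get_transactions` function are hypothetical. A real service would take `session_user_id` from its authenticated session rather than from a function argument.

```python
import re
import sqlite3


def get_transactions(conn: sqlite3.Connection, session_user_id: int,
                     raw_account_id: str) -> list[tuple]:
    # 1. Validate on the server: account IDs must be short ASCII digit strings.
    if not re.fullmatch(r"[0-9]{1,12}", raw_account_id):
        raise ValueError("invalid account id")
    account_id = int(raw_account_id)

    # 2. Authorize on the server: the session identity decides access,
    #    not whatever account ID the request happens to carry.
    row = conn.execute("SELECT owner_id FROM accounts WHERE id = ?",
                       (account_id,)).fetchone()
    if row is None or row[0] != session_user_id:
        raise PermissionError("account does not belong to the current user")

    # 3. Parameterized query: the value is bound as data, never spliced into SQL.
    return conn.execute(
        "SELECT id, amount FROM transactions WHERE account_id = ? ORDER BY id",
        (account_id,),
    ).fetchall()


# Hypothetical schema and demo data.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE accounts (id INTEGER PRIMARY KEY, owner_id INTEGER NOT NULL);
    CREATE TABLE transactions (id INTEGER PRIMARY KEY, account_id INTEGER, amount REAL);
    INSERT INTO accounts VALUES (1001, 1), (1002, 2);
    INSERT INTO transactions VALUES (1, 1001, 25.0), (2, 1002, 990.0);
""")

print(get_transactions(conn, 1, "1001"))  # [(1, 25.0)]
for attempt in ("1002", "1001 OR 1=1"):   # an IDOR attempt, then an injection attempt
    try:
        get_transactions(conn, 1, attempt)
    except (PermissionError, ValueError) as exc:
        print(f"{attempt!r} rejected: {exc}")
```

None of this depends on hiding the account ID from the client. The ID can travel as plain JSON, where the WAF can still inspect it.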

Audit for Encrypted Request Patterns

Security teams should actively look for APIs that accept encrypted or encoded request bodies. If the WAF is seeing Base64 blobs instead of structured JSON, something is wrong. This is not a sophisticated detection requirement — it is a configuration and architecture review. API discovery platforms like SecureNexus API POS can identify endpoints where request payloads are opaque and flag them for architectural review before they become blind spots in the security stack.
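As a rough illustration, the check can be approximated with a simple heuristic: flag bodies, or string fields of a JSON object body, that are valid Base64 and decode to high-entropy bytes. This is a minimal sketch and does not describe how SecureNexus API POS or any other product performs the check. The 64-character minimum, the 4.5 bits-per-byte threshold, and the function names are assumptions chosen for illustration. Legitimate Base64 fields, such as file uploads, will also trip the check, which is acceptable for a "flag for review" rule.

```python
import base64
import json
import math
import os
from collections import Counter


def shannon_entropy(data: bytes) -> float:
    """Bits per byte: random ciphertext tends toward 8, structured data sits lower."""
    if not data:
        return 0.0
    total = len(data)
    return -sum((n / total) * math.log2(n / total) for n in Counter(data).values())


def looks_opaque(body: str, min_len: int = 64, threshold: float = 4.5) -> bool:
    """Heuristic: flag a body, or any string field of a JSON object body,
    that is valid Base64 decoding to high-entropy bytes (likely ciphertext)."""
    candidates = [body]
    try:
        parsed = json.loads(body)
        if isinstance(parsed, dict):
            candidates = [v for v in parsed.values() if isinstance(v, str)]
    except ValueError:  # not JSON at all: inspect the raw body instead
        pass
    for value in candidates:
        if len(value) < min_len:
            continue
        try:
            raw = base64.b64decode(value, validate=True)
        except ValueError:  # binascii.Error is a subclass of ValueError
            continue
        if shannon_entropy(raw) >= threshold:
            return True
    return False


plain = json.dumps({"account_id": "1001", "note": "monthly rent"})
opaque = json.dumps({"data": base64.b64encode(os.urandom(96)).decode()})
print(looks_opaque(plain))   # False
print(looks_opaque(opaque))  # True
```

Short values are skipped because n bytes can carry at most log2(n) bits of entropy per byte. A 64-character Base64 string decodes to 48 bytes, capping it near 5.6, so a lower floor would leave too little room between random and structured data.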

Ensure WAF Has Visibility

If the application requires application-layer encryption for legitimate reasons — such as end-to-end encryption in messaging — then the architecture must account for WAF inspection. This typically means the WAF or an inline proxy decrypts the traffic before inspection, or the encryption terminates at a point before the WAF inspection layer. The principle is simple: if your security controls cannot see the traffic, they cannot protect it.
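Either option comes down to the same ordering: decrypt first, inspect second, forward last. Here is a minimal sketch of that ordering as a single proxy step. It assumes a JSON envelope with a hypothetical `data` field and two simplified signatures, and all function names are hypothetical. `placeholder_decrypt` is only Base64 decoding, standing in for real decryption with a key the proxy holds. The code comments say so explicitly.

```python
import base64
import json
import re
from typing import Callable

# Hypothetical signatures, applied only AFTER the envelope is decrypted.
SIGNATURES = [
    re.compile(r"(?i)\bunion\s+select\b"),
    re.compile(r"(?i)<script\b"),
]


def terminate_then_inspect(body: str, decrypt: Callable[[str], str]) -> str:
    """Inline proxy step: unwrap the encrypted envelope, inspect the plaintext,
    and return it for forwarding only if no signature matches."""
    plaintext = decrypt(json.loads(body)["data"])
    if any(sig.search(plaintext) for sig in SIGNATURES):
        raise PermissionError("blocked by inspection layer")
    return plaintext  # the backend receives plaintext and never handles the key


def placeholder_decrypt(blob: str) -> str:
    # Stand-in ONLY: Base64 decoding replaces real decryption with a key held by
    # the proxy, so this sketch runs on the standard library. Base64 is not encryption.
    return base64.b64decode(blob).decode()


def envelope(plaintext: str) -> str:
    """Wrap a plaintext body the way the client would (minus the real cipher)."""
    return json.dumps({"data": base64.b64encode(plaintext.encode()).decode()})


benign = envelope(json.dumps({"account_id": "1001"}))
attack = envelope(json.dumps({"account_id": "1 UNION SELECT card_number FROM cards"}))

print(terminate_then_inspect(benign, placeholder_decrypt))  # {"account_id": "1001"}
try:
    terminate_then_inspect(attack, placeholder_decrypt)
except PermissionError as exc:
    print(exc)  # blocked by inspection layer
```

The design choice that matters is where the key lives. If the inspection layer holds it, signatures, anomaly models, and logs all see the same request the application processes.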

Treat Obfuscation and Client-Side Crypto as Indicators

When you discover client-side encryption during an assessment, treat it as a signal that there may be unfixed vulnerabilities underneath. Teams that reach for client-side crypto as a security measure are often avoiding harder fixes. SecureNexus ASM can help identify internet-facing applications that exhibit this pattern, and SecureNexus Vulnerability Management can track whether the underlying vulnerabilities have actually been remediated — not just obscured.

Educate Development Teams

The most effective long-term fix is helping developers understand why client-side encryption does not work as a security control. Most developers implementing this pattern are not negligent — they genuinely believe they are adding security. A thirty-minute demonstration showing key extraction from a JavaScript bundle is usually enough to change minds permanently. Build this into your secure development training and your threat modelling sessions.

Key Takeaways

- Client-side encryption is not a security control. The key is in the client, and the client belongs to the attacker. Any vulnerability that exists behind the encryption is still fully exploitable.

- Encrypting API payloads blinds your WAF. Your most expensive perimeter defence becomes useless when it can only see ciphertext. Attackers get a free pass through the entire inspection stack.

- Client-side crypto is often a symptom of unfixed vulnerabilities. When you find it, look for the root cause it was meant to hide. The real risk is almost always still there.

- Mobile obfuscation buys hours, not months. Frida, jadx, and automated mobile testing frameworks make compiled-code encryption trivially bypassable. Do not treat compilation as a substitute for key management.

- Fix the vulnerability. Remove the encryption. Restore WAF visibility. This is the correct sequence. Anything else increases complexity while reducing security.

The Exposure Management Perspective

Client-side encryption is fundamentally an exposure management problem. The vulnerability exists. The encryption hides it from defenders, not from attackers. This is exactly the kind of misaligned security investment that a CTEM approach is designed to surface.

SecureNexus CTEM correlates findings across the stack — API posture assessments from SecureNexus API POS, external attack surface data from SecureNexus ASM, and vulnerability lifecycle data from SecureNexus Vulnerability Management — to show security leaders not just where vulnerabilities exist, but where the defensive architecture is actually making them harder to detect and respond to. When a WAF is blind to seventeen API endpoints because the application encrypted the traffic, that is an exposure that grows silently until someone tests it.

The fix is almost always simpler than the workaround. Server-side input validation, proper authorization, and parameterized queries are not exciting. But they work — and they let your security infrastructure do its job.

About the Author

Yash Kumar is a Lead in Research & Innovation, focused on exploring emerging technologies and turning ideas into practical solutions. He works on driving experimentation, strategic insights, and new initiatives that help organizations stay ahead of industry trends.